Use AI on your matters. Without exposing client data.

A private Super Brain for your contracts, pleadings, deposition transcripts, discovery, and case notes. Indexing stays local on your Mac. Cloud AI requests route through a zero-retention proxy, and common personal information is redacted on-device before it leaves.

No credit card required · GPT-5, Claude & Gemini · Offline via Ollama

When your matters involve

This isn't the kind of data you casually paste into ChatGPT.

But without AI, you're stuck reading everything line by line.

How Elephas works with your matters

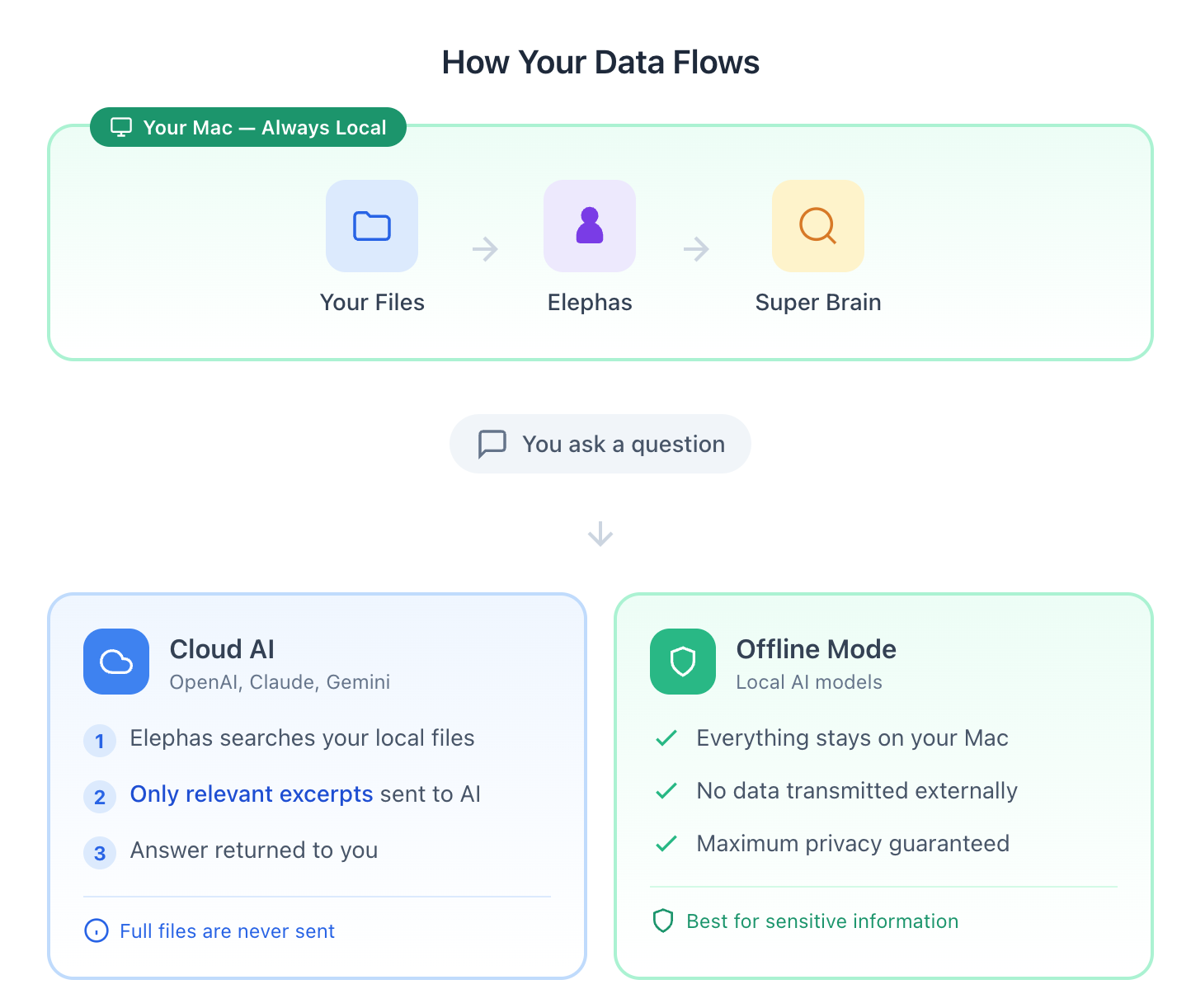

Indexing stays on your Mac. Redaction runs on-device. You choose whether inference happens locally, through the zero-retention proxy, or on your firm's own API keys.

Drop your matter files into a Super Brain

Create a dedicated Super Brain per matter or client. Add PDFs, contracts, depositions, discovery, and case notes. Indexed entirely on your Mac.

Elephas indexes locally and redacts on-device

Common personal information and structured identifiers are replaced with placeholder tokens before anything reaches a cloud model. Your full documents are never sent, and most metadata stays on your device.

Pick the mode that fits the matter

Run offline for privileged work, use cloud AI through the zero-retention proxy for general drafting and research, or bring your firm's own API key to take the proxy out of the data path entirely.

Local indexing

Matter files stay on your Mac. Nothing is uploaded to set up the Super Brain.

Zero-retention

Cloud requests route to providers with zero data retention enabled.

Your choice

Offline, cloud via proxy, or bring your own API key.

The private ChatGPT for lawyers

Consumer ChatGPT may train on your prompts unless you pay for an enterprise plan. Elephas runs Smart Redaction on your Mac first, then routes through a zero-retention proxy. You still get GPT-5 or Claude quality on contracts and pleadings, without sending raw client data.

AI contract review without leaking client data

Drop executed agreements into a per-matter Super Brain. Get obligations tables, renewal dates, and termination triggers, with paragraph-level citations back to your own files. Smart Redaction strips identifiers before any cloud call.

AI legal document review and analysis

Load a 400-page deposition or the full discovery production. Ask for a timeline of admissions, contradictions across witnesses, or every paragraph that mentions a term. Elephas searches the local index and cites the page.

Elephas vs Harvey AI, Spellbook, and ChatGPT

Honest comparison of the tools solo and small-firm attorneys actually weigh against each other.

| Feature | Elephas | Harvey AI | Spellbook | ChatGPT (consumer) |
|---|---|---|---|---|
| Solo and small-firm pricing | Yes | Enterprise only | Mid-market | Yes |
| Mac-native app | Yes | Web | Word add-in | Web and desktop |
| Redacts client data before sending to cloud LLM | Yes, on-device | N/A (cloud-only) | N/A | No |
| Works fully offline with a local model | Yes | No | No | No |
| Bring your own OpenAI or Anthropic key | Yes | No | No | N/A |

Comparison reflects published feature sets as of 2026. Harvey targets BigLaw. Spellbook focuses on contract drafting inside Word. Elephas is the option when you need privacy controls without a procurement cycle.

Diligence, answered

The three questions every attorney asks

Before your first privileged document touches an AI tool, these are the answers you want in writing. Here they are.

Does a third-party model provider retain or train on my data?

No. Cloud AI requests routed through the Elephas reverse proxy only go to providers with zero data retention enabled. Providers do not log, store, or train on your question or the passages sent with it. The request is processed in memory and the response is returned.

Using Bring Your Own Key? Requests go directly to the provider on your own account, so retention follows the policy on that account.

How long are uploaded matter files, chats, and outputs retained?

Your matter files and indexed content stay on your Mac. Elephas does not store your document content, search index, or chat history on its own servers.

When you use cloud AI, only the question text and the short excerpts needed to answer it are sent. Your full documents and most metadata stay on your device.

On sync: iCloud sync is on by default and keeps your data inside your own Apple iCloud account, never on Elephas servers. Turn it off in Preferences → General to keep everything on the device. See the iCloud FAQ below.

Can I request deletion of matter files and related data at any time?

Yes. Your matter files live on your Mac, so you remove them by deleting them locally. For account data and any related records, you can request deletion at any time by emailing support@elephas.app.

Doing formal vendor diligence for your firm? Write to the same address. We will respond directly rather than hand you a generic policy link.

Three ways to run AI. You pick the mode for the task.

Indexing is always local. Inference is your choice, per Super Brain.

Local model on your Mac

Use a local model via Ollama. Both indexing and inference stay on your device. Nothing leaves your Mac.

When to use it

Privileged discovery, attorney work-product, matters where your policy requires the least possible exposure.

Default cloud mode

Requests route through an Elephas reverse proxy to providers configured with zero data retention. Providers do not log, store, or train on the content. Smart Redaction anonymizes common personal information on-device before the request is sent.

When to use it

Drafting, summarization, research, and analysis where redaction-first processing fits the task.

Your firm's AI account

Connect your firm's OpenAI, Anthropic, or Google API key. Requests go straight from your Mac to the provider with no intermediary. Retention follows the policy on your own account.

When to use it

Firms with existing enterprise terms, or attorneys who want to remove the Elephas proxy from the data path entirely.
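The three modes above can be pictured as a small routing decision made per Super Brain. This is only a sketch under stated assumptions: `Mode`, `Route`, and `plan_route` are hypothetical names, not Elephas settings or APIs, and applying redaction in Bring Your Own Key mode is assumed from the statement that Smart Redaction runs whenever cloud AI is used.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    LOCAL = "local"  # local model via Ollama
    PROXY = "proxy"  # default cloud mode through the zero-retention proxy
    BYOK = "byok"    # the firm's own OpenAI, Anthropic, or Google key


@dataclass(frozen=True)
class Route:
    leaves_device: bool  # does any request leave the Mac?
    via_proxy: bool      # is the Elephas reverse proxy in the data path?
    redact_first: bool   # is on-device redaction applied before sending?


def plan_route(mode: Mode) -> Route:
    """Map a Super Brain's mode to the data path described above."""
    if mode is Mode.LOCAL:
        # Indexing and inference both stay on the device.
        return Route(leaves_device=False, via_proxy=False, redact_first=False)
    if mode is Mode.PROXY:
        # Redact on-device, then route through the proxy.
        return Route(leaves_device=True, via_proxy=True, redact_first=True)
    # BYOK: straight from the Mac to the provider, no intermediary.
    # Assumption: redaction still runs because this is a cloud request.
    return Route(leaves_device=True, via_proxy=False, redact_first=True)


# Hypothetical matters, each with its own mode.
super_brains = {
    "privileged-discovery": Mode.LOCAL,
    "general-drafting": Mode.PROXY,
    "firm-enterprise-account": Mode.BYOK,
}
routes = {name: plan_route(mode) for name, mode in super_brains.items()}
```

Keeping the choice per Super Brain means a privileged matter can stay offline while routine drafting uses a cloud model.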

Smart Redaction

Protection before processing, for legal work

When you use cloud AI, Smart Redaction scans outgoing queries and retrieved passages on your Mac and replaces common personal information with placeholder tokens before anything reaches the provider.

Coverage is strongest when sensitive details are common personal information or structured identifiers with clear patterns or labels. It is designed to reduce exposure, not to guarantee complete detection. For maximum control, pair it with offline mode.

Read the full coverage and caveats

What's covered for legal and policy work

- Case numbers

- Docket and matter references when labeled

- Client and contact details: names, emails, phone numbers, addresses

- Links to private portals and case-management systems

- IP addresses in logs and audit trails

Honest note: pattern matching catches common, structured identifiers. It does not guarantee every sensitive sentence in free-form narrative will be redacted. For the most sensitive matters, run offline.
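To make the idea of placeholder tokens concrete, here is a minimal sketch of pattern-based redaction. It is not Elephas's implementation: the patterns, the token format, and the `redact` function are assumptions chosen for illustration. It covers only a few structured identifiers; names and addresses would need more than regular expressions, and free-form narrative passes through unchanged, which is the limitation the note above describes.

```python
import re

# Hypothetical patterns for illustration only; not Elephas's actual rules.
PATTERNS = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("IP", re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")),
    ("PHONE", re.compile(r"\b\d{3}[-. ]\d{3}[-. ]\d{4}\b")),
    ("CASE_NO", re.compile(r"\b(?:Case|Docket|Matter) No\.? ?[\w:-]+", re.IGNORECASE)),
]


def redact(text):
    """Replace matched identifiers with tokens such as [EMAIL_1].

    Returns the redacted text and a token -> original mapping. In this
    sketch the mapping is assumed to stay on the device.
    """
    mapping = {}   # token -> original value
    seen = {}      # (label, value) -> token, so repeats share one token
    counters = {}  # label -> number of distinct values seen so far

    for label, pattern in PATTERNS:
        def substitute(match, label=label):
            value = match.group(0)
            key = (label, value)
            if key not in seen:
                counters[label] = counters.get(label, 0) + 1
                token = f"[{label}_{counters[label]}]"
                seen[key] = token
                mapping[token] = value
            return seen[key]

        text = pattern.sub(substitute, text)
    return text, mapping


redacted, mapping = redact(
    "Email jane@example.com about Case No. 2:24-cv-01234 "
    "from 10.0.0.12, call 415-555-0100."
)
print(redacted)
```

Reusing one token for a repeated value keeps the redacted passage readable for the model, since it can still tell that two mentions refer to the same party.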

What it looks like in practice

Four workflows small-firm attorneys run in Elephas every week.

Summarize a 60-page deposition

Drop the transcript into a Super Brain. Ask for a timeline, a list of admissions, and key contradictions. Party names and identifiers are redacted before the summary runs.

Draft a demand letter grounded in the actual case file

Elephas retrieves the relevant paragraphs from your pleadings, exhibits, and correspondence, and drafts with paragraph-level citations back to your own files.

Find the exhibit across 400 pages of discovery

Ask in plain English: "Which exhibit mentions the termination clause?" Elephas searches the local index and cites the page and paragraph.

Extract key dates, parties, and obligations from a contract stack

Load the executed agreements into a Super Brain. Ask for a structured obligations table, renewal dates, and termination triggers across all of them at once.
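The exhibit-search workflow above depends on every answer pointing back to a location in your own files. The sketch below shows the shape of that idea, assuming a simple in-memory index. A plain keyword match stands in for whatever retrieval Elephas actually uses, and the `Paragraph` type, file names, and citation format are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Paragraph:
    file: str    # source document (hypothetical file names below)
    page: int
    number: int  # paragraph number on that page
    text: str


def search(index, term):
    """Return every indexed paragraph whose text contains the term."""
    needle = term.lower()
    return [p for p in index if needle in p.text.lower()]


def cite(p):
    """Format a citation a reader can check against the source file."""
    return f"{p.file}, p. {p.page}, para. {p.number}"


# A toy local index; nothing here is uploaded anywhere.
index = [
    Paragraph("exhibit-a.pdf", 12, 3, "Either party may invoke the termination clause."),
    Paragraph("exhibit-b.pdf", 4, 1, "Payment is due within thirty days."),
    Paragraph("exhibit-c.pdf", 88, 2, "The Termination Clause survives assignment."),
]

for hit in search(index, "termination clause"):
    print(cite(hit))
```

Storing page and paragraph positions alongside the text at indexing time is what lets a citation be checked against the original document.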

Not another chatbot

Generic AI tools were built for open prompts on the open web. Your work is neither.

Generic AI chat

- × Consumer prompts are often used to train future models.

- × No ground truth. The model confidently invents a citation and you have to catch it.

- × Cannot see your actual matter files. You paste in excerpts and hope for the best.

Elephas

- Default cloud requests route to providers with zero data retention. No training on your content.

- Answers cite the paragraph from your own case file, so you can verify in one click.

- Indexed locally. Elephas works against your matter files without uploading them.

Pro+ is built for real work

Full control over your data

Pro+ adds the full redaction inspector, privacy controls, offline workflows, and priority performance. It is for attorneys whose work is sensitive by default.

- See exactly what was redacted, where, and why

- Choose local vs cloud per Super Brain

- Offline workflows for privileged matters

Questions attorneys ask before they install

Straight answers, with links to the underlying docs.

-

Consumer ChatGPT may use your inputs to improve future models, and ChatGPT Enterprise solves that only at enterprise pricing. Elephas routes cloud requests through a zero-retention proxy and runs Smart Redaction on your Mac first, so client names, party identifiers, and other common personal information are replaced with placeholder tokens before anything reaches OpenAI, Anthropic, or Google. For privileged work, run a local model and nothing leaves the device.

-

It depends on the tool. With Elephas, contracts are indexed locally on your Mac, common personal information is redacted on-device before any cloud call, and cloud requests go to providers with zero data retention enabled. Your full contract content stays on your device. For agreements that you cannot risk having logged externally, run a local model.

-

Different audience and architecture. Harvey is enterprise legal AI sold to BigLaw and in-house teams, runs in the cloud, and requires firm-level procurement. Elephas is a Mac-native private knowledge workspace for solo and small-firm attorneys. Indexing stays on your device, redaction runs locally, and cloud requests route through a zero-retention proxy. You can also bring your own OpenAI or Anthropic key.

-

Elephas does not certify compliance with any specific rule. Rule 1.6 obligations stay with you. What Elephas provides are three controls that make those obligations easier to meet: local indexing keeps client information on your Mac, on-device redaction reduces what is sent to any cloud model, and offline mode lets you process privileged work without any external request at all. For the most sensitive matters, run offline.

-

No. Elephas does not train any models on your data. Cloud AI requests routed through our reverse proxy only go to providers with zero data retention enabled, so providers do not log, store, or train on your question or the passages sent with it.

-

Yes. Your matter files and the search index live on your Mac, so you can delete them locally at any time. For account data, email support@elephas.app and we will remove it.

-

Elephas is not HIPAA or SOC 2 certified today. The architecture is local-first: your matter files stay on your Mac, and Elephas servers do not store your content. If your firm needs to evaluate this for regulated work, email support@elephas.app and we will walk through the data flow with you.

-

No. Elephas does not store your document content, search index, or chat history on its own servers. Your indexed data stays on your Mac. When you use cloud AI, only the question text and the short excerpts needed to answer it are sent, never your full documents. See the next question for how Apple's iCloud and CloudKit fit in.

-

Yes. Elephas uses Apple's iCloud for multi-device sync, and all of it stays inside your own Apple iCloud account, never on Elephas servers.

Two Apple services are used when sync is on: CloudKit mirrors app data (your Super Brains and chat records) across your Mac, iPhone, and iPad, and iCloud Drive syncs the indexed matter-file content. A single switch controls both.

Sync is on by default. Turn it off in Elephas › Preferences › General and nothing syncs anywhere. Data stays on the device. If your Apple ID has Advanced Data Protection enabled, Apple stores CloudKit and iCloud Drive data with end-to-end encryption that only your devices can decrypt.

-

Yes. Bring Your Own Key sends requests directly from your Mac to the provider using your own API account, so retention follows the policy on that account. This removes the Elephas proxy from the data path entirely. Configure it in Settings under AI Provider.

Sensitive work deserves a better default

Use AI on your matters with more control and less exposure

Local-first indexing, on-device redaction, and a zero-retention proxy by default. Pick the mode that fits the matter.

Doing formal vendor diligence for your firm? Contact us.