Is ChatGPT Safe for Confidential Documents? Here's the Reality

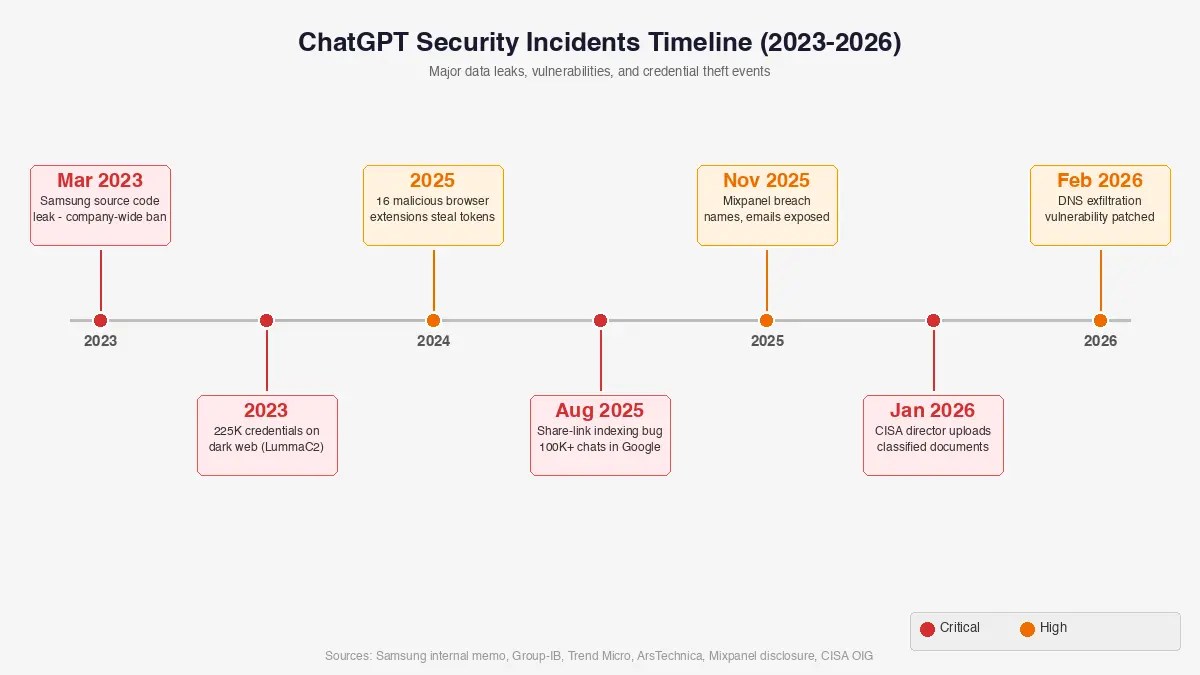

In early 2026, news broke that the acting director of CISA, the agency responsible for protecting America's critical infrastructure, had uploaded government documents marked “For Official Use Only” to the public version of ChatGPT during the summer of 2025.

The person whose entire job is protecting the country from cyber threats used a consumer AI tool for sensitive government material. If the head of U.S. cybersecurity can make that mistake, the question is no longer whether ChatGPT is safe for confidential documents. It is how much damage has already been done.

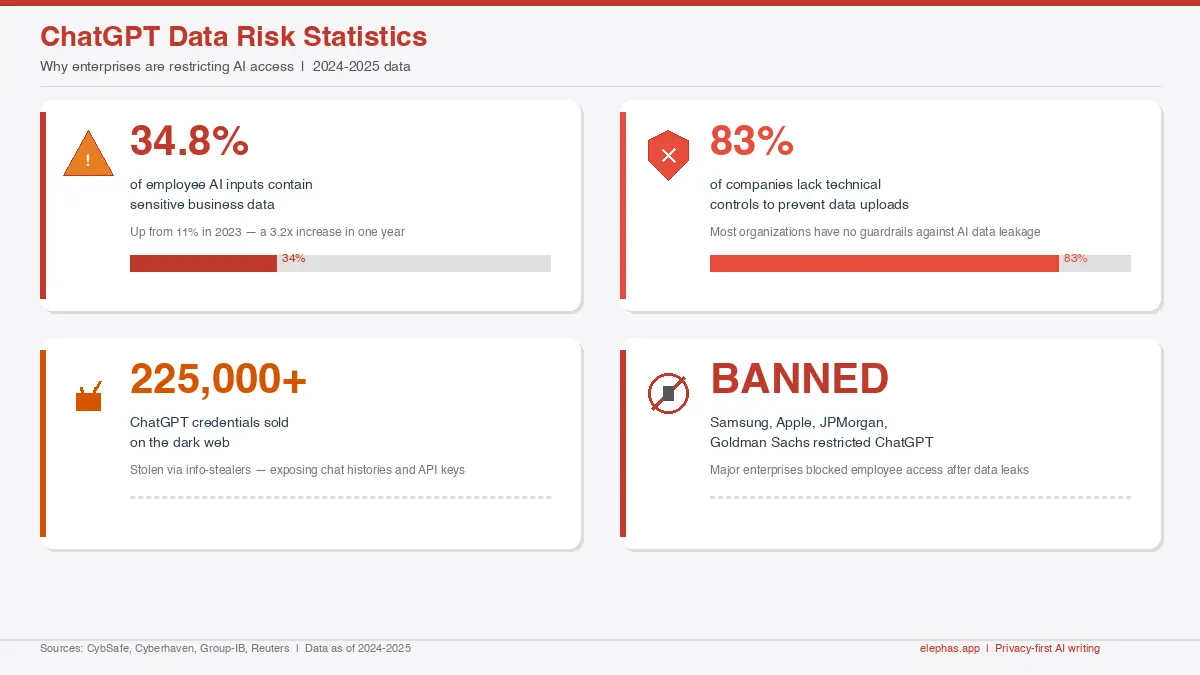

- 34.8% of employee AI inputs contain sensitive data
- 83% of companies lack technical controls to prevent data uploads
- 225K+ ChatGPT credentials sold on the dark web
- BANNED: Samsung, Apple, and JPMorgan restricted ChatGPT

Executive Summary

- Sensitive data now makes up 34.8% of all employee inputs to AI tools like ChatGPT, up from 10.7% in 2023, according to Cyberhaven's 2025 AI Adoption and Risk Report.

- Major companies including Samsung, Apple, JPMorgan, and Goldman Sachs have banned or restricted ChatGPT use after data leak incidents.

- OpenAI's consumer ChatGPT uses your conversations for model training by default, and authorized reviewers can access your chats.

- A DNS-based data exfiltration vulnerability discovered in early 2026 proved that ChatGPT's security sandbox is not airtight.

- Local-first AI tools that process documents on your own device offer a practical alternative for anyone handling confidential data.

The Problem Is Bigger Than You Think

That CISA incident was not a one-off. Across industries, employees are feeding confidential information into ChatGPT at an alarming rate.

According to a Kiteworks study of cybersecurity professionals, only 17% of companies have automated technical controls to prevent employees from uploading sensitive data to public AI tools like ChatGPT. The remaining 83% rely on non-technical measures such as training sessions, warning emails, policy guidelines, or no controls at all.

- Cyberhaven's Q4 2025 research shows sensitive data makes up 34.8% of employee inputs to AI tools, a threefold increase from 10.7% in 2023.

- IBM's 2025 report found that shadow AI breaches cost $4.63 million on average, $670,000 more than breaches not involving shadow AI. Of the 13% of organizations that reported AI-related breaches, 97% lacked proper AI access controls.

- Group-IB found over 225,000 compromised ChatGPT credentials on dark web markets from January to October 2023. The top three infostealer families responsible were LummaC2 (70,484 devices), Raccoon (22,468), and RedLine (15,970), among others.

- 73.8% of workplace ChatGPT accounts are personal, non-corporate accounts invisible to IT, according to Cyberhaven. Their latest 2026 data shows the sensitive data share has risen further to 39.7%.

- Samsung, Apple, Amazon, JPMorgan, Goldman Sachs, Verizon, Deutsche Bank, and Northrop Grumman have all restricted or banned ChatGPT. Verizon's internal memo stated: “ChatGPT is not accessible from our corporate systems, as that can put us at risk of losing control of customer information, source code and more.”
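The headline figures above can be sanity-checked with a few lines of arithmetic. This is a minimal sketch using only the numbers quoted in this article. The variable names are illustrative, and the baseline breach cost is derived from the two IBM figures rather than quoted from the report.

```python
# Figures quoted in the list above (Cyberhaven, IBM, Kiteworks).
share_2023 = 10.7   # % of AI inputs that were sensitive in 2023
share_2025 = 34.8   # % of AI inputs that were sensitive in 2025

# "Threefold increase": ratio of the two shares.
growth_factor = share_2025 / share_2023

# IBM: shadow AI breaches cost $4.63M on average, $670K more than other breaches.
shadow_ai_breach_cost = 4_630_000
shadow_ai_premium = 670_000
implied_baseline_cost = shadow_ai_breach_cost - shadow_ai_premium  # derived, not quoted

# Kiteworks: 17% have automated technical controls, so the remainder do not.
without_technical_controls = 100 - 17

print(f"Growth factor: {growth_factor:.2f}x")
print(f"Implied non-shadow-AI breach cost: ${implied_baseline_cost:,}")
print(f"Companies without automated controls: {without_technical_controls}%")
```

The ratio works out to a little over three, which matches the "threefold" description, and the implied cost of a breach without shadow AI comes to just under $4 million.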

Samsung learned this the hard way in early 2023. Three separate employees leaked confidential data within weeks of the company lifting a previous ChatGPT ban. One uploaded proprietary source code to debug a problem. Another transcribed a company meeting and pasted the notes into ChatGPT. A third used it to identify defective equipment in a semiconductor line.

Samsung had to ban ChatGPT entirely, and an internal survey revealed that 65% of its own employees considered AI tools a security risk.

The financial sector panicked too. Bank of America, Citigroup, Goldman Sachs, Wells Fargo, JPMorgan Chase, and Deutsche Bank all restricted or banned employee access to ChatGPT. Apple blocked internal use over fears that product roadmaps could leak. Northrop Grumman, handling defense contracts, followed suit.

How ChatGPT Actually Handles Your Data

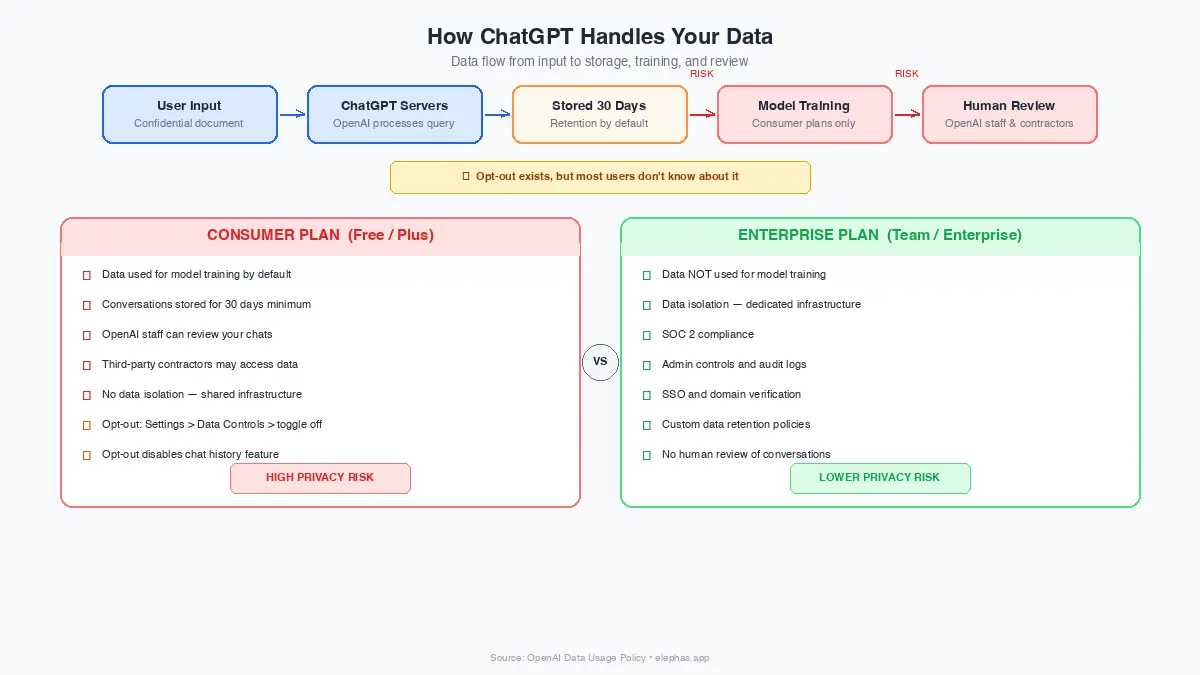

Here is what most people get wrong about ChatGPT's privacy: the risk goes beyond hacking. It is about how the system is designed to work.

On consumer plans (Free, Plus), OpenAI uses your conversations to train future models by default. You can opt out manually through Settings > Data Controls, but most users never touch that toggle. Until you do, every document, every contract snippet, every client detail you type becomes potential training material.

- Consumer ChatGPT (Free/Plus) uses your chats for model training unless you manually opt out in Settings > Data Controls.

- Authorized OpenAI reviewers can access your conversations for safety checks, abuse monitoring, and annotation.

- OpenAI hires outside contractors to review content on most plans, bound by confidentiality agreements but still an additional set of eyes on your data.

- Deleted conversations and Temporary Chats are kept on OpenAI's systems for 30 days before deletion.

- Zero Data Retention (where data is not stored at all) is available through the API and Enterprise agreements, not on consumer plans.

Business and Enterprise accounts get better treatment. OpenAI does not train models on data from ChatGPT Enterprise, Business, Edu, or Healthcare plans. But most employees are not using those plans. They are using personal accounts, free tiers, or Plus subscriptions where none of those protections apply.

The legal picture adds another layer: OpenAI was under a court order to retain all consumer ChatGPT data indefinitely during litigation with The New York Times. That order ended in September 2025, but for months every conversation on consumer ChatGPT was preserved with no option to truly delete it.

Here is how the risk breaks down by plan when handling confidential documents:

| Plan | Trains on Data | Human Review | Data Retention | Confidential Doc Risk |
|---|---|---|---|---|
| ChatGPT Free | Yes (default) | Yes | 30 days | HIGH |
| ChatGPT Plus | Yes (default) | Yes | 30 days | HIGH |
| ChatGPT Team | No | Limited | 30 days | MEDIUM |
| ChatGPT Enterprise | No | No (with BAA) | Configurable / ZDR | LOW |
| Elephas (local mode) | No | No | None (on-device) | MINIMAL |
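For teams that want to turn a table like this into an enforceable rule, one option is to encode it as data and check it before a document is sent anywhere. The sketch below is illustrative only: the `PlanRisk` class, the `allowed_for_confidential` function, and the default threshold of `LOW` are assumptions made for this example, not part of any OpenAI or Elephas API. The values are copied from the table above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanRisk:
    trains_on_data: bool
    human_review: str
    retention: str
    risk: str


# Rows copied from the table above.
PLAN_RISK = {
    "ChatGPT Free": PlanRisk(True, "Yes", "30 days", "HIGH"),
    "ChatGPT Plus": PlanRisk(True, "Yes", "30 days", "HIGH"),
    "ChatGPT Team": PlanRisk(False, "Limited", "30 days", "MEDIUM"),
    "ChatGPT Enterprise": PlanRisk(False, "No (with BAA)", "Configurable / ZDR", "LOW"),
    "Elephas (local mode)": PlanRisk(False, "No", "None (on-device)", "MINIMAL"),
}

# Risk levels ordered from least to most risky.
RISK_ORDER = ["MINIMAL", "LOW", "MEDIUM", "HIGH"]


def allowed_for_confidential(plan: str, max_risk: str = "LOW") -> bool:
    """Return True if the plan's risk level is at or below max_risk.

    Unknown plans are denied by default (an assumed policy choice).
    """
    entry = PLAN_RISK.get(plan)
    if entry is None:
        return False
    return RISK_ORDER.index(entry.risk) <= RISK_ORDER.index(max_risk)


for name in PLAN_RISK:
    verdict = "allowed" if allowed_for_confidential(name) else "blocked"
    print(f"{name}: {verdict}")
```

Denying unknown plans by default is a deliberate choice here: a new tool or tier should be reviewed before anyone sends it confidential documents.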

What the Security Community Is Actually Worried About

The real fears in cybersecurity circles go beyond the “your data trains the model” concern. Recent incidents have exposed structural vulnerabilities that most users never hear about.

In early 2026, security researchers found a DNS-based side channel in ChatGPT that could silently siphon conversation data. The attack bypassed OpenAI's safeguard that blocks direct outbound network requests from the code execution environment.

OpenAI patched it on February 20, 2026, and confirmed no evidence of exploitation, but it proved the sandbox is not impenetrable.
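To see why DNS can act as a side channel at all, it helps to know that a hostname lookup can itself carry data: text encoded into a subdomain label reaches whoever runs the name server for that domain, even when ordinary web requests are blocked. The sketch below is a generic defensive heuristic for spotting such lookups in DNS logs. It does not describe the mechanics of the vulnerability reported above, which this article does not detail. The function names and thresholds are assumptions chosen for illustration.

```python
import math
from collections import Counter


def shannon_entropy(s: str) -> float:
    """Bits of entropy per character in s (0.0 for an empty string)."""
    if not s:
        return 0.0
    counts = Counter(s)
    n = len(s)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


# Hypothetical thresholds for illustration; real deployments tune these on their own traffic.
MAX_LABEL_LEN = 40
MIN_LEN_FOR_ENTROPY_CHECK = 16
MAX_ENTROPY = 3.5


def looks_like_exfiltration(query_name: str) -> bool:
    """Flag DNS names whose subdomain labels are unusually long or random-looking."""
    labels = query_name.rstrip(".").split(".")
    # Naively treat the last two labels as the registered domain and skip them.
    for label in labels[:-2]:
        if len(label) > MAX_LABEL_LEN:
            return True
        if len(label) >= MIN_LEN_FOR_ENTROPY_CHECK and shannon_entropy(label) > MAX_ENTROPY:
            return True
    return False


print(looks_like_exfiltration("www.example.com"))
print(looks_like_exfiltration("0123456789abcdef.evil.example"))
```

A heuristic like this produces false positives (content delivery networks often use long, random-looking hostnames), so it is better suited to flagging lookups for review than to blocking them outright.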

- A DNS-based exfiltration vulnerability in ChatGPT was patched February 20, 2026, after responsible disclosure by security researchers.

- In mid-2025, ChatGPT's “Share” feature included a discoverability toggle that made shared conversations indexable by search engines, with over 100,000 chats captured by the Internet Archive before OpenAI removed the feature.

- 16 malicious browser extensions were caught stealing ChatGPT session tokens in early 2026, affecting roughly 900 users before takedown.

- The November 2025 Mixpanel breach exposed names, email addresses, and user identifiers from OpenAI's API platform.

- 225,000+ ChatGPT credentials (from 2023) were sold on dark web markets, harvested from compromised devices, not from hacking OpenAI directly.

The mid-2025 ChatGPT share-link discoverability feature proved particularly troubling. OpenAI's CISO, Dane Stuckey, announced its removal in late July 2025 after it enabled unintended exposure.

Researchers found over 100,000 shared conversations preserved in the Internet Archive's Wayback Machine.

IT administrators on professional forums remain vocal about a related issue: employees using personal ChatGPT accounts for work without oversight, fueling unmonitored shadow AI that evades policy enforcement.

What This Means if You Handle Sensitive Information

For professionals who work with confidential data daily, the implications are direct and immediate. Lawyers face a specific trap: inputting client information into ChatGPT may waive attorney-client privilege.

In February 2026, two federal courts reached opposite conclusions on the same question. In United States v. Heppner (SDNY), Judge Jed Rakoff ruled that documents shared with a consumer AI platform were not privileged.

In Warner v. Gilbarco, a different court held that AI tools are “tools, not persons” and disclosure to them does not waive privilege. Bar associations in multiple states have issued guidance warning attorneys that using public AI tools with client data may constitute an ethical violation, regardless of how courts eventually settle this question.

- Standard ChatGPT is not HIPAA compliant. Entering patient health information on Free or Plus plans is a HIPAA violation that can result in terminations and significant fines.

- OpenAI now offers ChatGPT for Healthcare with HIPAA support, but it requires a separate Business Associate Agreement and operates in a protected environment.

- Lawyers using ChatGPT with client data risk waiving attorney-client privilege, and multiple bar associations have issued warnings about this.

- Financial professionals at regulated firms cannot use consumer ChatGPT without violating internal compliance policies.

- Freelancers and consultants handling client documents face contractual liability if confidential data enters public AI systems.

Healthcare workers face HIPAA violations if they enter patient information into standard ChatGPT. OpenAI now offers ChatGPT for Healthcare that supports HIPAA compliance, but it requires a separate enterprise agreement and a Business Associate Agreement that most individual practitioners will never sign.

The gap between what people think ChatGPT protects and what it actually protects is wide. Consumer plans do not encrypt data end-to-end, do not guarantee data isolation, and do not prevent human reviewers from seeing your conversations.

A Different Approach: Keep Your Data Off the Cloud Entirely

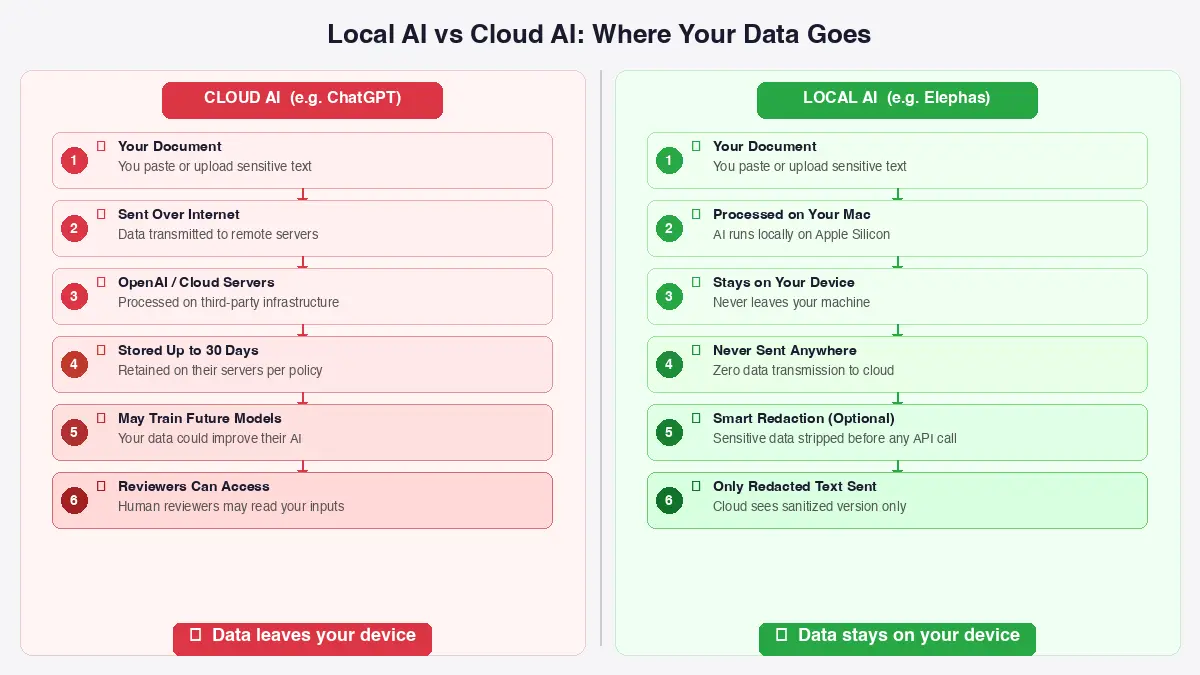

The core problem with ChatGPT for confidential documents is not that OpenAI is careless. It is that the architecture requires your data to leave your device.

Tools like Elephas take the opposite approach. Elephas is a Mac-native AI assistant that processes your documents locally. Your PDFs, contracts, research papers, and client files stay on your machine. Sensitive data is automatically detected and redacted before anything reaches a cloud AI model, your content is never used to train AI models, and nothing passes through a third-party reviewer's screen.

- Elephas processes documents locally on your Mac, and supports a fully offline mode where nothing leaves your device at all.

- You can bring your own API keys for GPT-4o, Claude, Gemini, Groq, or any other provider and use them through Elephas on any paid plan. You get the same output quality as using those models directly, but with a smart redaction layer sitting between you and the API that strips sensitive information before it ever leaves your Mac.

- The smart redaction feature (available across all plans) automatically detects and removes credit card numbers, email addresses, client names, and medical records from your prompts. The AI model only sees the redacted version, so you get top-tier results without sacrificing privacy.

- Super Brain lets you build a searchable AI knowledge base from your own documents across 20+ file formats without uploading anything to external servers.

- Elephas starts at $9.99/month for the Standard plan, with the Professional plan at $19.99, and smart redaction is included. Pro Plus at $39.99/month adds unlimited tokens and advanced features.

The point is not to give up the quality of GPT-4o or Claude for the sake of privacy. It is to use those exact same models, at the same quality, while keeping your sensitive data off their servers entirely. That is what local-first architecture with smart redaction actually solves.
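The general idea of redacting before sending can be sketched in a few lines. This is not Elephas's implementation, which is not public in this article. It is a minimal illustration that assumes simple regular expressions for email addresses and card numbers, plus a Luhn checksum to reduce false positives. The `redact` and `luhn_valid` names are hypothetical, and detecting client names or medical records would need far more than pattern matching.

```python
import re

# Illustrative patterns only; real redaction needs much broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def luhn_valid(number: str) -> bool:
    """Check a candidate card number with the Luhn checksum."""
    digits = [int(c) for c in number if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact(text: str) -> str:
    """Replace emails and Luhn-valid card numbers with placeholders."""
    def replace_card(match: re.Match) -> str:
        return "[CARD]" if luhn_valid(match.group()) else match.group()

    text = CARD_RE.sub(replace_card, text)
    return EMAIL_RE.sub("[EMAIL]", text)


prompt = "Bill jane.doe@example.com, card 4111 1111 1111 1111."
print(redact(prompt))
```

Only the redacted string would be passed to a cloud model in this design. The original text, and any mapping needed to restore the placeholders afterward, stays on the local machine.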

The Privacy-First Future of AI

The question is not whether AI tools are useful for working with documents. They clearly are. The question is whether the default architecture, sending everything to someone else's server, is the right one for confidential work.

- Google's Gemma 4 models now run fully offline on edge devices, proving local AI is production-ready.

- Apple is building on-device AI into its ecosystem, signaling that the industry sees the future in local processing.

- The safest data is data that never leaves your machine, and tools that make this practical, such as Elephas, already exist.

- Audit what your team is actually pasting into ChatGPT, and disable model training in consumer account settings at minimum.

For anyone handling confidential documents, the practical next step is clear: stop trusting the default settings, start asking where your data actually goes, and consider whether a local-first AI tool might solve the productivity problem without creating a security one.