Apple's Siri AI Overhaul: Standalone App, Third-Party AI, and the iOS 27 Reboot

Apple is preparing the biggest overhaul of Siri since the voice assistant first launched in 2011. Two reports from Bloomberg this week revealed that iOS 27 will bring a standalone Siri app, a new interface built into the Dynamic Island, and an Extensions system that opens Siri to rival AI services like Google Gemini, Anthropic's Claude, and xAI's Grok.

The company plans to unveil these changes on June 8, 2026, at its Worldwide Developers Conference. After more than a year of delays and public setbacks with Apple Intelligence, this is Apple's attempt to reposition itself in the AI race.

- June 8: WWDC 2026 reveal date

- ~$1B/yr: Reported value of the Google-Apple AI partnership

- 4+: Third-party AI services in testing

- 2+ years: How long Siri's promised AI features have been delayed

Executive Summary

- Apple is building a dedicated Siri app for iPhone, iPad, and Mac with a full chat interface, conversation history, and support for voice and text input.

- A new Extensions system will allow third-party AI services like Claude, Gemini, and Grok to integrate directly into Siri, ending ChatGPT's exclusive access.

- Google's Gemini models will power the core of Siri's AI capabilities through a partnership reportedly worth around $1 billion per year, with a total value that could reach $5 billion over the contract's life.

- Apple previously explored using Anthropic's Claude as Siri's backbone, but the deal collapsed over pricing that would have doubled costs annually.

- Most of the personalized Siri features, first announced at WWDC 2024, are not expected to fully launch until fall 2026 with iOS 27.

Siri Gets Its Own App for the First Time

For nearly 15 years, Siri has existed as a voice overlay on Apple devices. You press a button, ask a question, and get a response. There has never been a place to go back and review what you asked or continue a previous conversation. That changes with iOS 27.

Apple is building a dedicated Siri app for iPhone, iPad, and Mac. Internally code-named Campos, the app brings Siri closer to what users already expect from tools like ChatGPT or Google Gemini. The main screen shows prior conversations in a list or grid layout, and users can pin favorites, search through past interactions, and start new conversations with a plus button.

- The conversation view uses a chat bubble format similar to Apple's Messages app, with a text entry field at the bottom

- Users can toggle between voice and text input within the same conversation

- Attachments like documents and photos can be uploaded for analysis, matching what modern AI chatbots already offer

- When starting a new conversation, Siri suggests prompts based on what you've asked before

This is Apple's clearest admission that the old Siri model, where every interaction starts from scratch, no longer works. Users have grown accustomed to threaded conversations with AI tools, and Apple is adapting to meet that expectation.

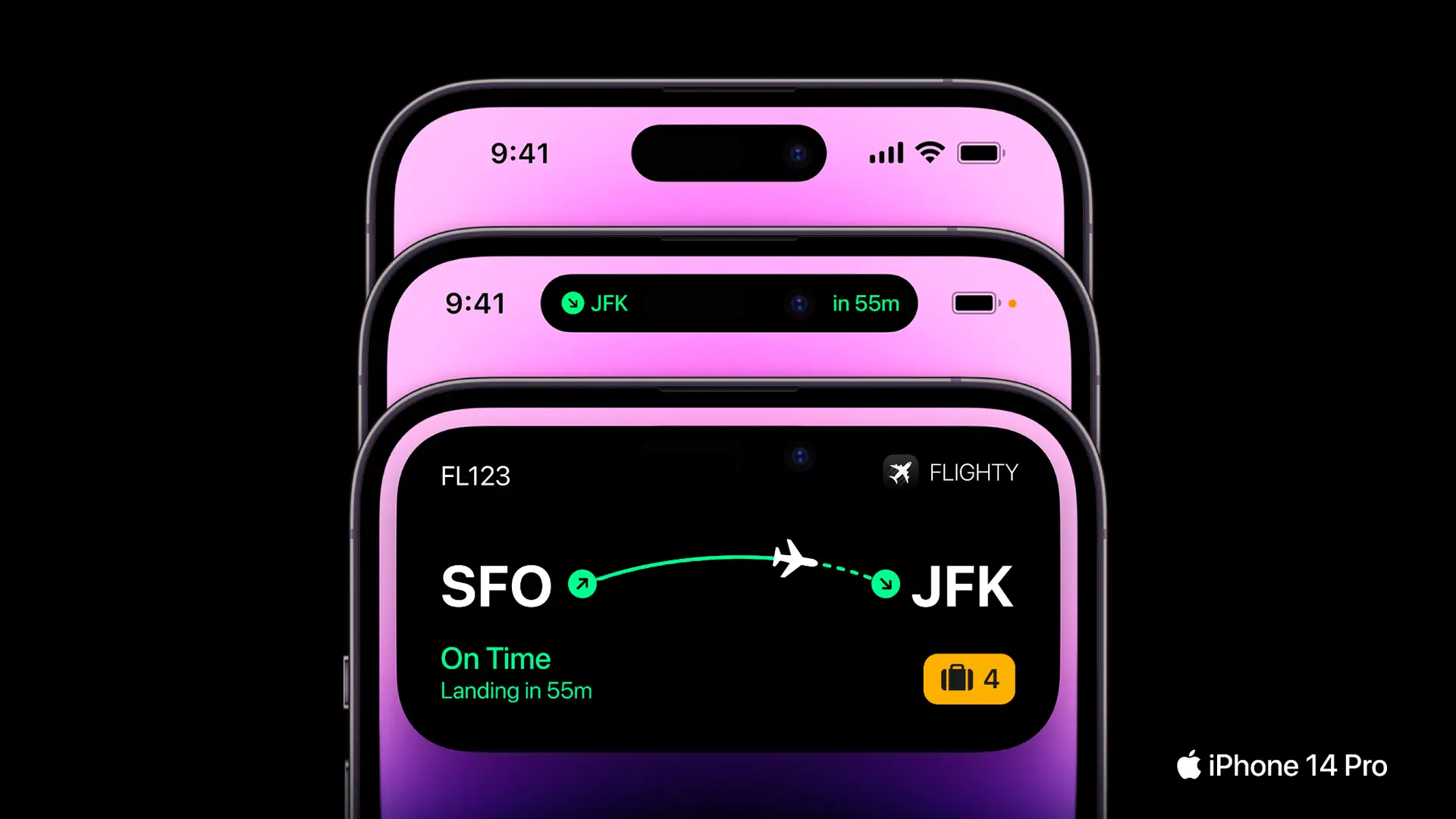

A Redesigned Interface Inside the Dynamic Island

Beyond the standalone app, Apple is also changing how Siri appears when you activate it the traditional way, through the power button or a voice command.

The current glowing edge effect introduced in iOS 18 is being replaced. In the new design being tested, Siri activates inside the Dynamic Island at the top of the screen and prompts the user to “Search or Ask.” While processing a request, a pill-shaped “Searching” label appears alongside a glowing Siri icon. Once results are ready, the interface expands into a larger translucent panel.

- Users can pull this panel down further to begin a back-and-forth conversation

- Apple is testing Siri as a replacement for Spotlight, its current on-device search system, combining local file search and AI queries into one interface

- A systemwide “Ask Siri” toggle will appear in menus across built-in apps, letting users send highlighted text or selected content directly into a Siri conversation

- A “Write with Siri” option will appear at the top of the keyboard, giving quicker access to Apple's Writing Tools feature, which has been difficult to find in current iOS versions

Apple is also building in a feature called Personal Context, which was originally promised in 2024 but never shipped. It allows Siri to read your messages, notes, emails, and calendar to give more relevant answers. The updated Siri will also provide web-sourced responses with summaries, bullet points, and images, along with daily news summaries pulled from Apple News content.

The final design is still being tested internally, and Apple's human interface team is evaluating multiple options before the WWDC reveal.

Opening Siri to Rival AI Services

The most significant strategic shift in these reports is Apple's decision to open Siri to competing AI services.

Since Apple Intelligence launched with iOS 18, ChatGPT has been the only third-party AI service integrated into Siri. Under an arrangement with OpenAI, users could route certain queries to ChatGPT when Siri could not answer them. That exclusivity ends with iOS 27.

Apple is building an Extensions system that will allow any AI chatbot app installed from the App Store to work inside Siri. Test versions of iOS 27 already include a toggle menu in Settings under Apple Intelligence and Siri, where users can enable or disable individual AI services.

- Providers confirmed in testing include OpenAI's ChatGPT, Google's Gemini, and Anthropic's Claude; xAI's Grok has also been mentioned as a likely candidate

- Other potential candidates include Perplexity, Amazon's Alexa, Meta AI, and Microsoft's Copilot, though it is unclear whether all will be approved

- An internal description in test builds reads: “Extensions allow agents from installed apps to work with Siri, the Siri app and other features on your devices”

- Users will be directed to a new App Store section from the settings menu to download additional AI services

- Instead of queries defaulting to ChatGPT, users will specify which AI service to use for each request

This move eliminates the need for Apple to negotiate individual integration deals like the one it struck with OpenAI. It also creates a new revenue opportunity: when users sign up for premium tiers of these AI services through the App Store, Apple takes a percentage of the subscription.

The reports briefly moved financial markets. Shares of Google parent Alphabet fell 3.4% to close at $280.92 on the day the news broke, while Apple shares were essentially flat at $252.89.

Google Gemini Powers the New Siri

While third-party AI services will be available as options, the core engine running Siri's AI capabilities comes from Google.

Apple and Google announced a multi-year partnership in January 2026. Under this arrangement, the next generation of Apple Foundation Models will be trained and distilled from Google's Gemini models. Apple uses Gemini as a foundation to build its own smaller, on-device models through a process called model distillation, rather than running Gemini directly on iPhones.

Gemini specifically powers the “personalized Siri,” the version that can access personal data, understand on-screen content, and take actions across apps.

- The financial terms have not been officially disclosed, though Bloomberg reported the deal is worth roughly $1 billion per year, with some estimates placing the total contract value at up to $5 billion

- Tim Cook confirmed the arrangement during an earnings call, saying: “You should think of what is going to power the personalized version of Siri as a collaboration with Google”

- Gemini models run on Apple's Private Cloud Compute servers, not Google's infrastructure, preserving Apple's privacy architecture

- The partnership scope extends beyond Siri into Safari and Spotlight Search

- On-device features like Writing Tools, Image Playground, and Notification Summaries have not been confirmed to use Gemini, and they likely continue running on Apple's own models

This partnership is separate from the Extensions system. The Gemini partnership concerns the underlying technology powering Siri, while the Extensions system lets users reach the actual Gemini service (or any other chatbot) through Siri's interface.

Why Apple Had to Start Over

This overhaul did not happen by choice. It is the result of more than a year of failed promises and internal dysfunction around Apple Intelligence.

Apple first announced its AI strategy at WWDC in June 2024. The presentation included sweeping promises about a personalized Siri that could read your email, understand what was on your screen, and take actions across apps.

When Apple Intelligence shipped that fall, it delivered basic features like Writing Tools, Image Playground, and Notification Summaries. The personalized Siri features were nowhere to be found.

- The personalized Siri was initially delayed to spring 2025, then pushed to March 2026, and is now expected sometime in fall 2026

- Apple had to pause its AI-generated notification summaries for news apps after the system produced inaccurate and fabricated headlines attributed to outlets like The New York Times, The Washington Post, and the BBC

- WWDC 2025 came and went with no meaningful Siri improvements announced

- John Giannandrea, Apple's head of AI and machine learning, stepped down from his role in December 2025 and transitioned to an advisory position before retiring. He was described internally as not aggressive enough in pushing for the resources the AI team needed

- A Bloomberg investigation published in May 2025 found weak communication between AI teams and marketing, budget misalignment, and a broken hybrid architecture that stitched legacy machine learning to generative AI systems

One detail that captures the internal shift: Apple software engineering head Craig Federighi, who now oversees the company's AI efforts, reportedly had a turning point after personally using ChatGPT. That experience helped accelerate Apple's push to rebuild Siri from the ground up, fully based on large language models rather than the patched-together system it was running before.

The Anthropic Deal That Collapsed

Before settling on Google, Apple seriously considered Anthropic's Claude as the AI backbone for the new Siri.

Apple ran custom versions of Claude on its own servers during an evaluation period. The technology worked. The problem was money. Anthropic's proposed contract called for costs to double every year for three consecutive years. Apple decided that pricing structure was not sustainable and walked away from the deal.

- Bloomberg's Mark Gurman first reported the deal's collapse, with AppleInsider and other outlets covering the story in January 2026, citing the steep cost escalation as the reason

- Apple chose Google's Gemini as an alternative, which came with what appears to be a more predictable financial arrangement

- In a twist, Claude will still be available inside Siri through the new Extensions system, just as one option among several rather than the engine running everything underneath

The failed Anthropic deal shaped the strategy Apple ultimately adopted. Rather than depending on a single external AI provider, Apple built a system where Gemini powers the core and multiple services compete as user-facing options.

What Users Should Expect

Apple plans to reveal these changes at WWDC on June 8, 2026, with a full release expected alongside iOS 27 in the fall.

Several important caveats apply. Many people involved in the project believe the full set of personalized Siri features, including personal data access and on-screen awareness, will not be ready until fall at the earliest.

Features could still change or get delayed before the announcement. The latest internal test versions of iOS 27 include these features, but Apple's track record with AI delivery timelines has been inconsistent.

- According to reports from multiple tech outlets, the new Siri features may require devices with at least 12GB of RAM. If accurate, older iPhones with 8GB could receive a scaled-down version that relies more heavily on cloud processing. Apple has not officially confirmed this requirement

- Apple has not confirmed any of these plans publicly, and a company spokesperson declined to comment on the Bloomberg reports

- The Extensions system requires AI chatbot developers to add support through their App Store apps, so availability at launch depends on how quickly those companies build the integration

- Apple's WWDC website currently promises details on “AI advancements” but offers no specifics

For users, the practical impact comes down to two things. First, Siri will finally work more like the AI assistants people have been using for the past few years, with real conversations, memory of past interactions, and the ability to process documents and images.

Second, users will no longer be locked into a single AI provider. If you prefer Claude over ChatGPT, or Gemini over both, you can set that as your default and use it through the same Siri interface you already know.

Whether Apple can actually deliver all of this on schedule remains the open question. The company promised most of these capabilities two years ago and has yet to ship them. WWDC in June will show how much has changed.

Want AI That Works Without the Cloud?

While Apple figures out its AI strategy, Elephas already runs locally on your Mac — no cloud processing, no data leaving your device, no waiting for iOS 27.
