Starlink Updated Its Privacy Policy on January 15. If You Don't Opt Out, Your Data Trains AI.

On January 15, 2026, SpaceX changed the Starlink Global Privacy Policy. The change appears as additional language in the policy document on the Starlink website, and it gives SpaceX permission to use your Starlink data, including audio, video, and the contents of shared files, to train its AI models.

The same clause permits sharing that data with “service providers and third-party collaborators” (xAI being the obvious recipient given the ongoing merger talks). Unless you opt out. Most users won't know there's anything to opt out of.

So what does the new policy actually permit, why does the opt-out structure matter, and what should a privacy-conscious professional do while the SpaceX-xAI merger talks are underway? Let's look at it.

- Jan 15: date of the silent policy change

- 9M+: Starlink users now in scope

- $230B: xAI valuation set to inherit the data

- 1-5%: typical opt-out adoption rate

Executive Summary

- SpaceX updated the Starlink Global Privacy Policy on January 15, 2026 to permit customer data to be used for AI training

- The policy added language allowing data to be shared with “service providers and third-party collaborators” (a phrase undefined and broad)

- The covered data includes communication data: audio, visual content, shared files, and inferences SpaceX makes about you

- The change is opt-out, not opt-in. Decades of academic research on default settings suggest most users will not find or use the toggle

- The change comes while SpaceX and xAI are in merger talks; a completed deal would route Starlink data into xAI's Grok training pipeline

- Many Starlink users (rural, maritime, remote) have no alternative ISP, so opting out is their only protection

- The structural alternative for sensitive AI work is Elephas, the privacy-friendly AI knowledge assistant for macOS. It runs built-in local LLM models on your Mac and uses Smart Redaction to mask sensitive data before any cloud call, so AI work stays on your device regardless of which ISP carries it

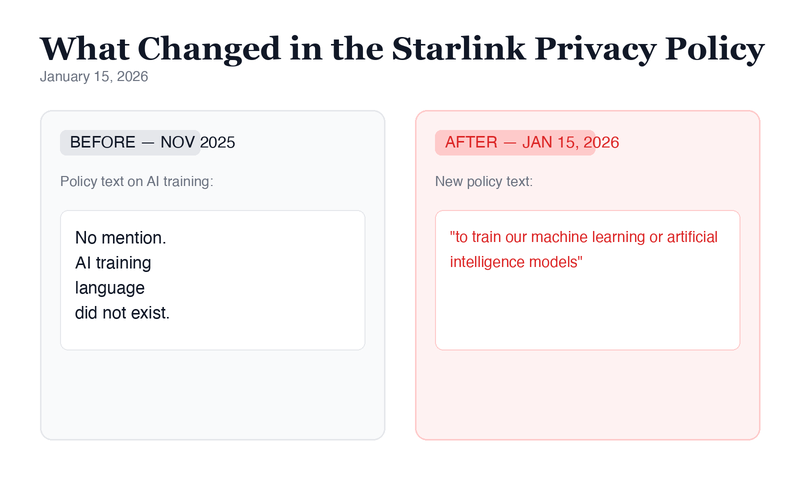

What Actually Changed in the Policy

The change is small in word count and large in implication. An archived November 2025 version of the policy, reviewed by Reuters, contained no language about AI training. The standard ISP data collection terms were the only mention of how customer information would be used.

The January 15, 2026 version adds new language. Starlink may now use customer data “to train our machine learning or artificial intelligence models” and may share that data with “service providers and third-party collaborators.”

The phrase “third-party collaborators” is not defined anywhere in the policy. That is the loophole. xAI is the obvious recipient given the ongoing merger talks, but the language is broad enough to cover any commercial partner SpaceX chooses now or in the future.

The phrase that changed everything reads: “to train our machine learning or artificial intelligence models.” It did not appear in the most recent prior version (the November 2025 archive reviewed by Reuters).

An opt-out toggle exists in account settings, though users need to actively find it. SpaceX did not respond to a request for comment from Reuters about the change.

What Data Starlink Actually Holds on You

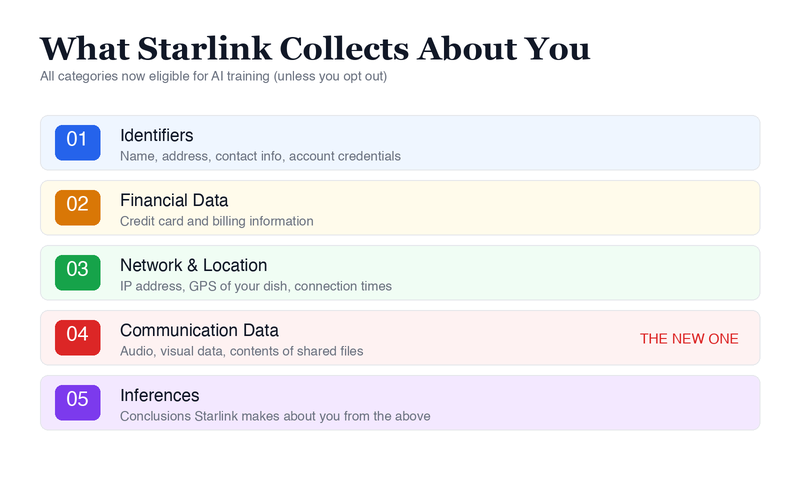

The phrase “customer data” sounds abstract until you read what Starlink actually collects. The Global Privacy Policy lists several distinct categories, each of which is now eligible for AI training under the January 15 change.

The first set of categories is familiar to anyone who has used an internet provider: contact information like name and address, financial data like credit card and billing information, location information, and your IP address. These are the kinds of data points most ISPs collect.

The category that matters most is what Starlink calls “communication data.” The policy describes this as audio and visual information, the contents of shared files, and “inferences we may make from other personal information we collect.” This is not metadata. SpaceX is reserving the right to use the actual content of what flows through the dish.

Anyone whose work involves confidential information, and whose connectivity has touched a Starlink dish since January 15, has a question to answer about exposure. The policy does not specify exactly what subset of communication data SpaceX will pull into AI training. The permission is broad. The opt-out is narrow.

By profession, the people most directly affected include:

- Lawyers with privileged client communications routed over Starlink in rural offices or while traveling

- Journalists with source communications and field reporting from regions where Starlink is the only viable connection

- Clinicians and therapists running telehealth sessions in rural practices with HIPAA exposure

- Financial advisors handling client portfolio discussions and document transfers

- Founders and executives doing strategic work over connectivity that runs through a Starlink dish

For any of these professionals, the wider question of how to keep client data safe when using AI tools is now an infrastructure question as well as an application question.

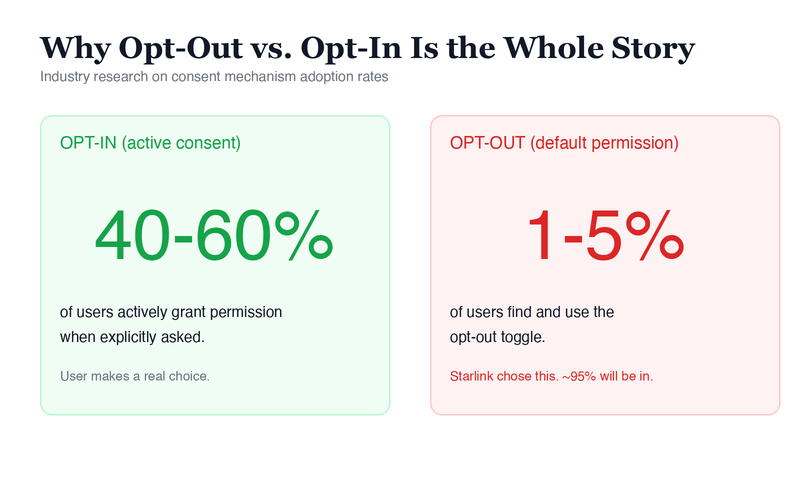

The Opt-Out Math Is the Whole Story

The choice between opt-in and opt-out is the entire ballgame. Academic research on default settings, beginning with the foundational Johnson, Bellman, and Lohse paper published in Marketing Letters in 2002 and replicated repeatedly since, consistently shows that most people stay with whatever default they are given.

The share of users who actively opt out typically lands in the low single digits, while the share who actively opt in when asked can reach the forty to sixty percent range. By choosing opt-out, SpaceX has effectively given itself permission for the vast majority of its nine million users without those users actively consenting to anything.
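As a rough back-of-the-envelope sketch, the arithmetic looks like this in Python. It uses only the figures cited in this article (about nine million users and a 1 to 5 percent opt-out rate); both are estimates, and the function name is purely illustrative.

```python
def users_still_opted_in(total_users, opt_out_percent):
    """Users who remain in scope if opt_out_percent of them flip the toggle."""
    # Integer arithmetic keeps the result exact for whole-number percentages.
    return total_users * (100 - opt_out_percent) // 100


TOTAL_USERS = 9_000_000  # the "nine million" figure cited in this article

for pct in (1, 5):
    remaining = users_still_opted_in(TOTAL_USERS, pct)
    print(f"{pct}% opt out -> {remaining:,} users still in scope")
```

Even at the top of that range, roughly 8.55 million of the nine million users would remain covered by the new clause.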

The update appeared in the policy document on the Starlink website. Privacy law in many jurisdictions requires “reasonable notice” for material changes to data processing terms. Whether an on-website change alone clears that bar will likely be tested by regulators rather than answered by SpaceX itself.

There is also a category of users for whom opting out is the only realistic protection. Starlink is the only viable connectivity in many rural areas, on boats, and at remote sites where no other ISP reaches.

“Switch ISPs” is not a real option for those users. They cannot vote with their wallet. Their only choice is to find the toggle and disable it, which assumes they know it exists.

Anupam Chander, a technology law professor at Georgetown University, told Reuters: “It certainly raises my eyebrow and would make me concerned if I was a Starlink user. Often there's perfectly legitimate uses of your data, but it doesn't have a clear limit to what kind of uses it will be put to.”

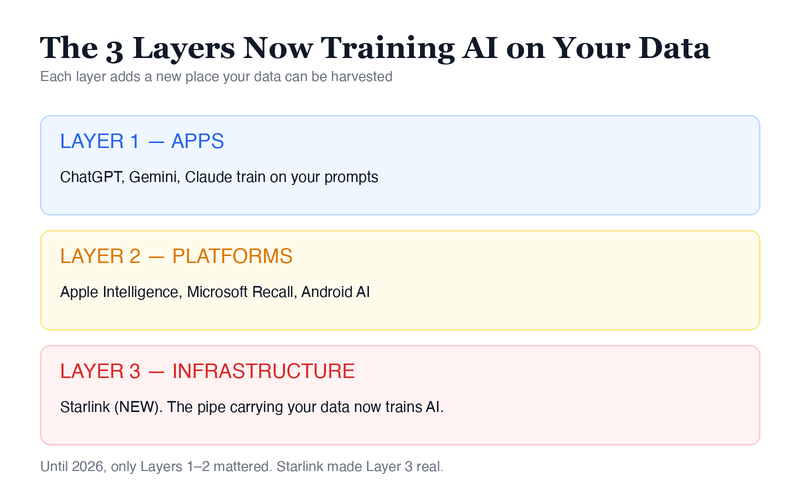

The Bigger Pattern: Your Infrastructure Is Now an AI Training Pipeline

The Starlink change is not a standalone story. It is the third layer of a pattern that has been building for two years. The first layer is the apps. The consumer versions of ChatGPT, Gemini, and Claude can all train on user inputs by default, with varying opt-out mechanisms. Most professionals already treat cloud AI with caution when handling confidential documents.

The second layer is the platforms. Apple Intelligence, Microsoft Recall, and Google's Gemini integration in Android now process user data, on-device or in the cloud, to power AI features. This layer is less obvious to most users because the AI is woven into the operating system rather than a discrete app.

The third layer is new with Starlink. The pipe carrying your data to the internet now reserves the right to train AI on what flows through it. Starlink is the first major commercial ISP we are aware of to add this language to its privacy policy. It is reasonable to expect other infrastructure providers to watch the precedent and follow.

The Vertical Integration Angle

xAI (the maker of the Grok chatbot and its underlying LLM), X (the social platform), and Starlink (the ISP) are all controlled by Elon Musk. The reported SpaceX-xAI merger talks are the missing context that explains the policy change. The change functions as pre-merger plumbing, preparing Starlink's nine-million-user data pool to flow into xAI's training pipeline if the deal closes.

For a professional who has spent the last two years configuring AI tools to respect privacy, this is the moment the strategy needs an upgrade. Locking down apps is no longer enough if the platform underneath and the infrastructure underneath that are both pulling data into AI training. The only safe place to do sensitive work is on your own device, with your own model, in your own control.

What To Do Before the Merger Closes

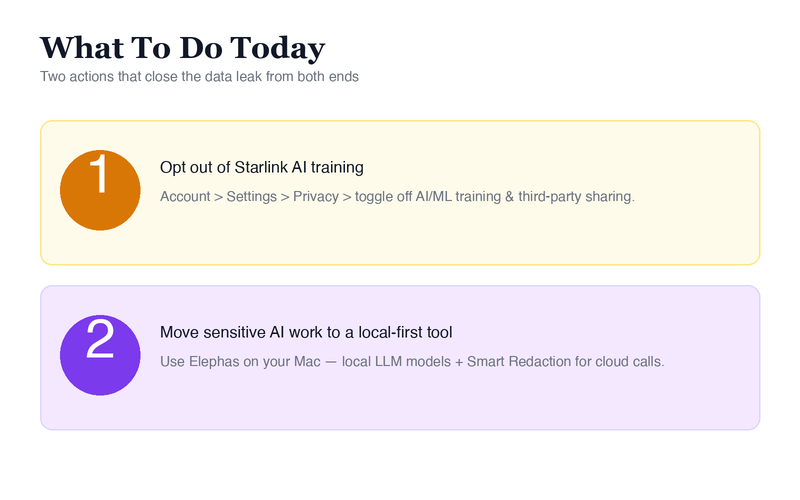

There are two things to do, and they close the leak from both ends.

The first action is the Starlink-side fix. Sign in to your Starlink account and look for the AI/ML training and third-party data sharing options in your account's privacy settings. The exact location of the toggle may vary by region and account type, so consult starlink.com for the current path.

If you handle privileged or regulated communications, also audit which client, patient, source, or business comms have been routed over your dish since January 15, 2026. Depending on your jurisdiction and the rules that apply to your work (GDPR, HIPAA, CCPA, and similar), you may have a notification obligation to those parties.
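If you already keep a record of sensitive calls and transfers, the audit can start as a simple filter. The Python sketch below assumes a hypothetical log format (the `day`, `network`, and `party` fields and the sample entries are invented for illustration); adapt it to whatever records you actually hold.

```python
from datetime import date

POLICY_CHANGE = date(2026, 1, 15)  # date of the policy update discussed above

# Hypothetical log: one record per sensitive session you kept notes on.
sessions = [
    {"day": date(2026, 1, 10), "network": "starlink", "party": "Client A"},
    {"day": date(2026, 1, 20), "network": "starlink", "party": "Client B"},
    {"day": date(2026, 2, 3), "network": "fiber", "party": "Client C"},
]


def sessions_to_review(records, network="starlink", since=POLICY_CHANGE):
    """Return records carried over `network` on or after `since`."""
    return [r for r in records if r["network"] == network and r["day"] >= since]


for record in sessions_to_review(sessions):
    print(record["day"].isoformat(), record["party"])
```

The resulting list is only a starting point for deciding which parties, if any, you need to contact.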

The second action is the structural fix. The ISP-level data exposure is one layer of the problem. The other layer is what happens when you use AI tools that send your work to cloud servers run by providers like OpenAI, Anthropic, and Google, even on a connection that has nothing to do with Starlink. That is where Elephas fits.

Elephas is the privacy-friendly AI knowledge assistant for macOS. It provides built-in local LLM models, which means your prompts and your documents are processed on your Mac, not on a remote server. There is no third-party install required, since the local models ship with the app.

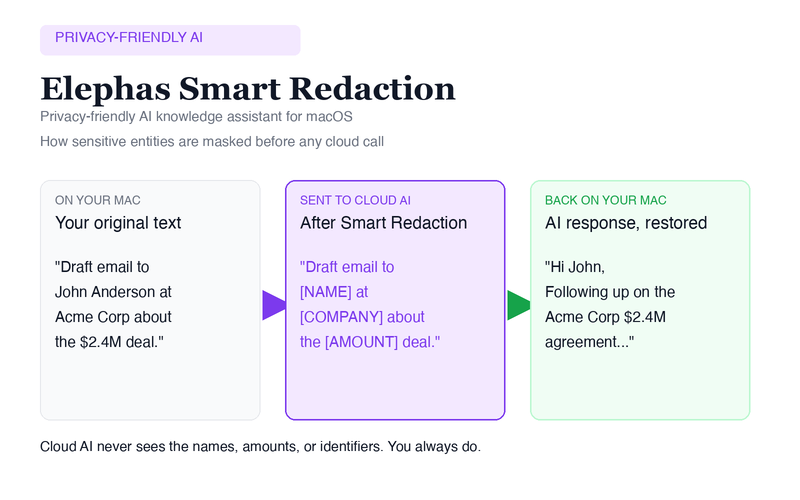

For tasks where you do want a frontier cloud model like GPT-5.4 or Claude Opus, Elephas's Smart Redaction feature sits in front of the cloud call. Smart Redaction automatically detects and masks sensitive information like names, addresses, and financial details from your prompts before sending them to cloud AI, then restores them in the response on your device, so private data never leaves your Mac.

The cloud provider sees the structure of your request but never the sensitive content. The response comes back, gets restored on your Mac, and you see the full answer in plain text.
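To make the mask-then-restore idea concrete, here is a minimal Python sketch. It is not Elephas's implementation, which this article does not document. The two regex patterns, the placeholder format, and the function names `redact` and `restore` are all assumptions for illustration, and a real detector would need to cover far more than emails and card numbers.

```python
import re

# Hypothetical patterns for illustration only.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "CARD": re.compile(r"\b\d{4}(?:[ -]?\d{4}){3}\b"),
}


def redact(text):
    """Swap sensitive matches for placeholders; return masked text and the mapping."""
    mapping = {}

    def make_sub(label):
        def sub(match):
            token = f"[{label}_{len(mapping) + 1}]"
            mapping[token] = match.group(0)
            return token
        return sub

    for label, pattern in PATTERNS.items():
        text = pattern.sub(make_sub(label), text)
    return text, mapping


def restore(text, mapping):
    """Put the original values back into text that contains placeholders."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


prompt = "Email jane.doe@example.com about card 4111 1111 1111 1111."
masked, mapping = redact(prompt)
print(masked)                    # the only text a cloud model would receive
print(restore(masked, mapping))  # restored locally, on your own machine
```

The mapping between placeholders and real values stays on the device, which is what lets the response be restored locally without the cloud provider ever seeing the originals.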

Elephas works across your entire Mac: in Mail, Safari, Pages, Notion, and anywhere else you can select text. For lawyers, clinicians, journalists, financial advisors, and any professional whose work touches confidential information, the AI you use for drafting, summarizing, and researching never becomes another data pipeline pointed at someone else's training set.

The connection underneath does not matter. The data does not leave the device unless you choose, and even then only after redaction. The fuller case for private AI versus public AI for work extends the same logic well beyond the Starlink moment.

What To Do Today

The Starlink change is small in word count and large in implication. One sentence in a privacy policy gave SpaceX permission to use the communication data of nine million users for AI training. If the merger closes, that data flows into xAI's training pipeline.

Most of those users will never see the update. Most will never find the toggle. The opt-out math means the default outcome is that nearly all of them are in.

This is not the last change of its kind. If the SpaceX-xAI merger closes, and as other infrastructure providers watch the precedent, expect this clause to appear in more policies, with less fanfare.

The pattern is set: collection happens at every layer of the stack, consent is opt-out where possible, and the data flows toward whichever AI model the parent company is building.

Two things to do today, in order:

- Opt out of Starlink's AI training in your account settings. Privacy section, AI/ML toggle off, third-party sharing off.

- Move the AI work you do at your desk to a local-first tool. Run it on something that keeps your data on your device. Elephas is built for exactly that.

The cheapest defense against future privacy shocks is making sure your data was never collected in the first place. The infrastructure layer of AI privacy and security is no longer hypothetical. It is shipping in the policies of the companies that already have your data.

Sources

- Yahoo Finance / Reuters: Musk's Starlink updates privacy policy to allow consumer data to train AI (Jan 31, 2026)

- Reuters: Original wire report (Jan 30, 2026)

- Starlink Global Privacy Policy (current version)

- Johnson, Bellman, and Lohse: “Defaults, Framing and Privacy: Why Opting In-Opting Out” (Marketing Letters, 2002)

- Georgetown Law: Anupam Chander, Technology Law Professor