Can You Upload Contracts to AI? Privacy Risks, Vendor Workflow, and Safer Alternatives for AI Contract Management

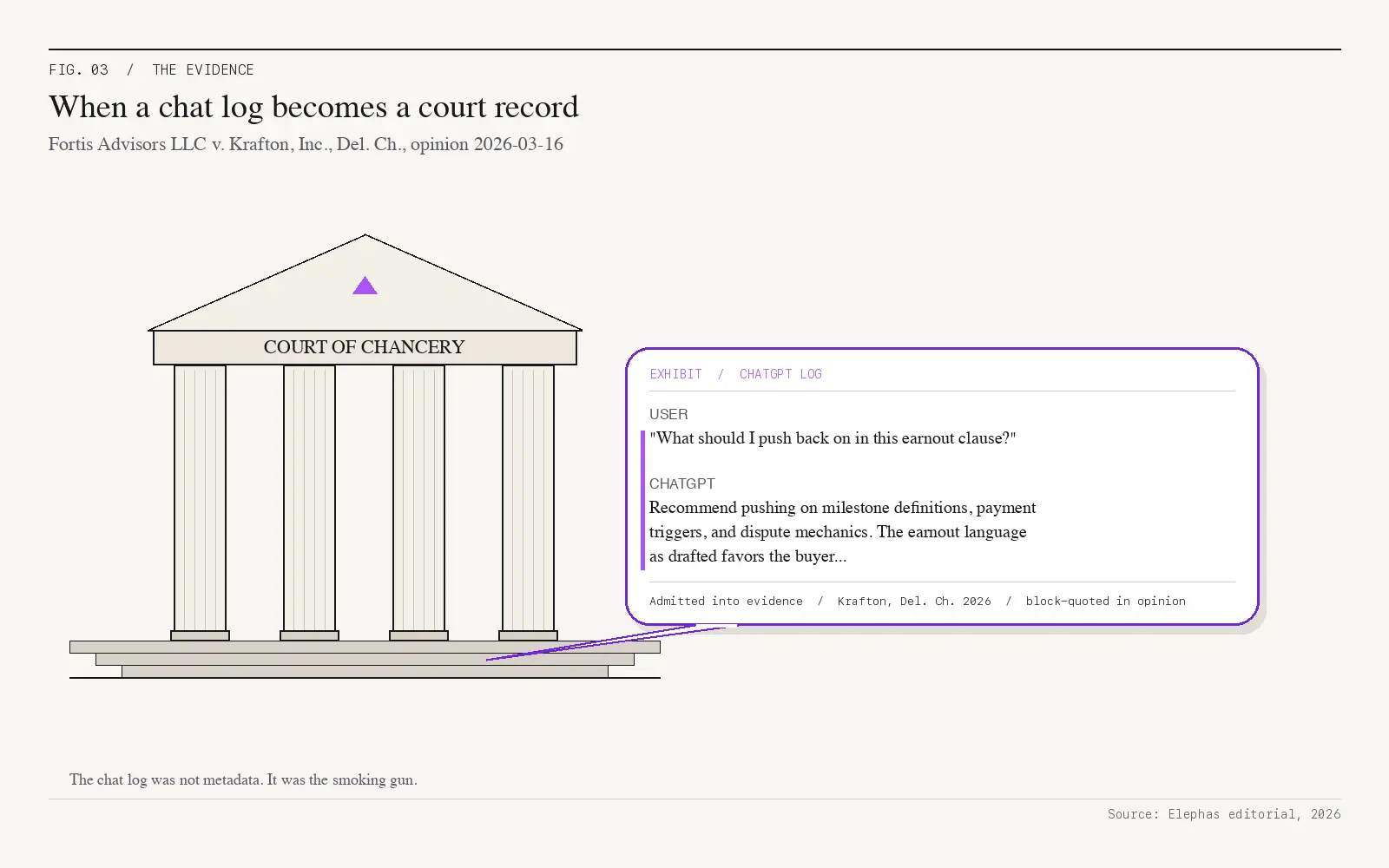

In March 2026, a Delaware judge quoted a CEO's ChatGPT chats word-for-word inside a $250 million court ruling, noting he “followed most of [the AI Tool]'s recommendations.” About four weeks earlier, a federal court in New York ruled that ChatGPT-style chats are not protected by attorney-client privilege, because the AI company is treated as an outside party that keeps everything you type.

Two rulings, about a month apart, reframed the contract-and-AI question for every lawyer, paralegal, and contract manager on a Mac. This article walks through what actually happens when a contract goes into a public AI tool, why the vendor agreements quietly permit it, and the on-device alternative that keeps the document on your laptop instead of a vendor's training pipeline.

- 11%: of pasted data is confidential (Cyberhaven, 1.6M workers)

- 20M: ChatGPT logs OpenAI was ordered to hand over to NYT plaintiffs

- $250M: Krafton earnout, with ChatGPT logs admitted as evidence

- 3 yrs: Google retains human-reviewed chats after you delete them

Executive Summary

- Pasting into a consumer chat tab can breach the contract's own confidentiality clause — the AI vendor is a third party under most NDAs and MSAs

- A February 2026 federal court ruling (United States v. Heppner) held that ChatGPT-style chats are not protected by attorney–client privilege — the AI company is treated as an outside party that keeps everything you type

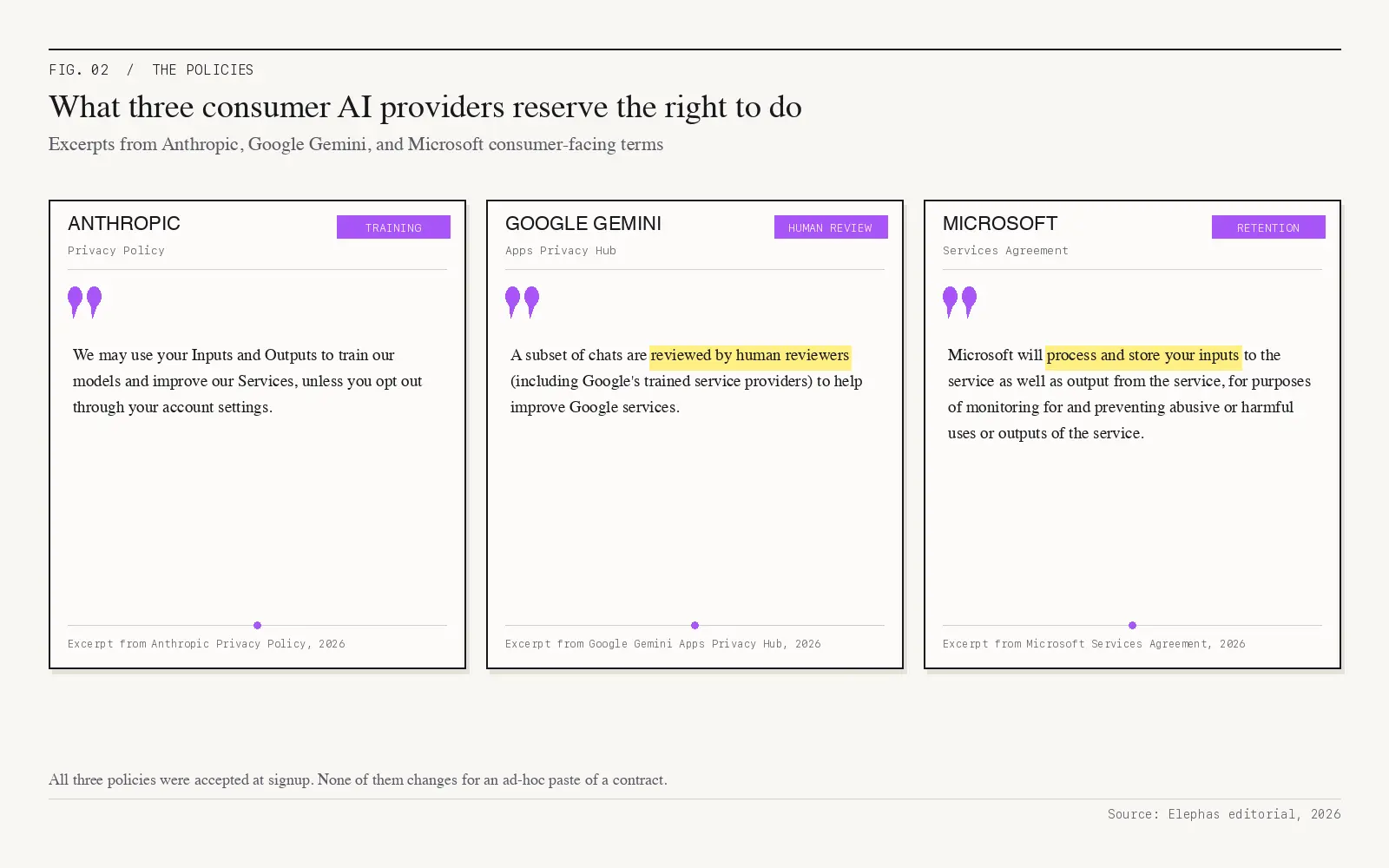

- Anthropic, Google, and Microsoft's terms of service all permit using your inputs for training, human review, or compliance monitoring

- “Delete” doesn't mean deleted — OpenAI was ordered to produce 20 million logs, including chats users had removed

- On-device AI is the only verifiably private path: the contract never transmits to any cloud, so there is no third party to subpoena

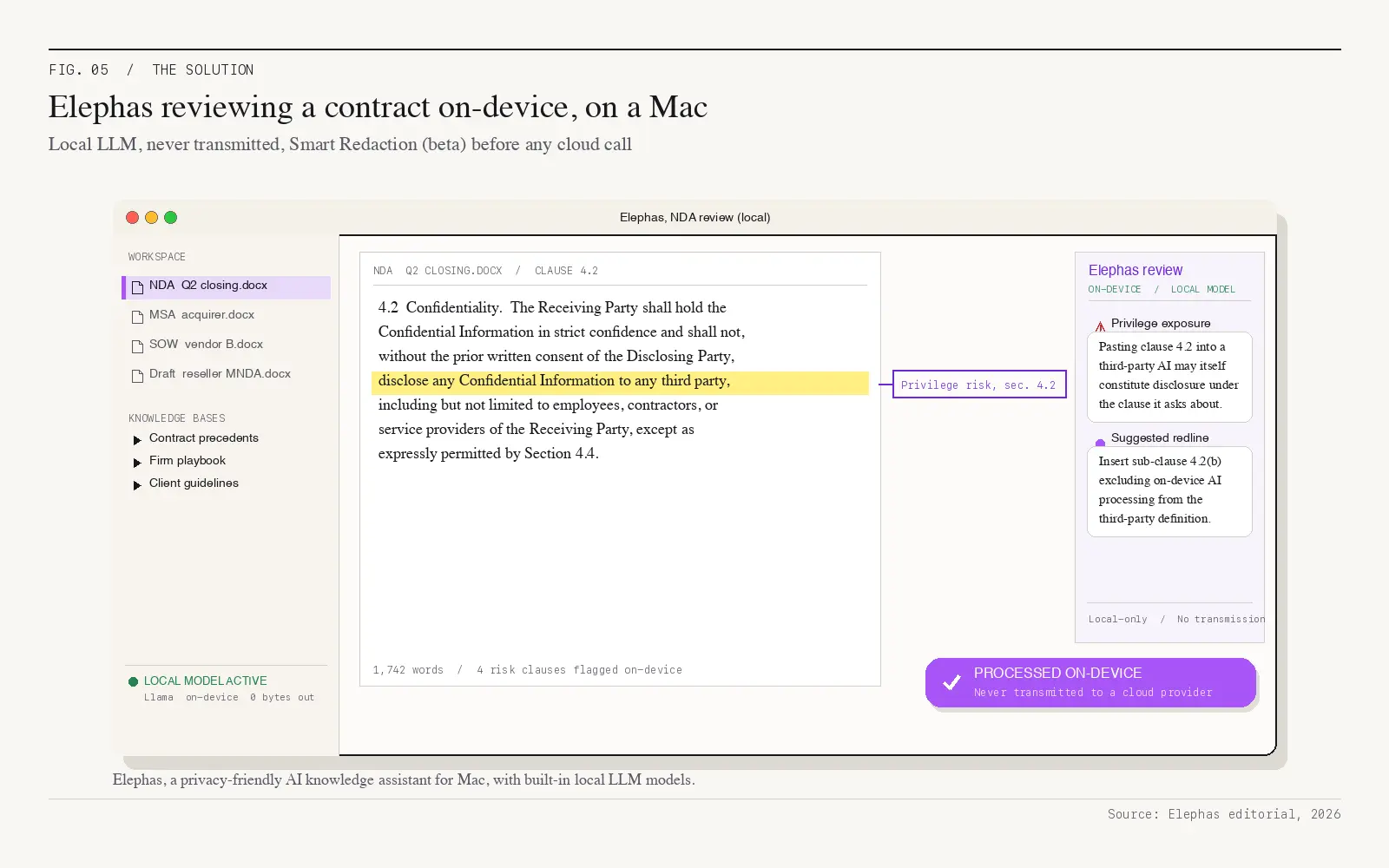

- Elephas, a privacy-friendly AI knowledge assistant for Mac, provides built-in local LLM models for fully on-device contract review and Smart Redaction (beta) for the cloud calls you can't avoid

What Happens When You Upload a Contract to ChatGPT or Another AI Tool

In a March 2026 Delaware court ruling on a $250 million payment dispute (Fortis Advisors v. Krafton), the judge quoted the CEO's ChatGPT chats word-for-word inside the official ruling, noting he “followed most of [the AI Tool]'s recommendations.” Late-night chat logs became courtroom evidence.

That paste created four simultaneous failure modes, any one enough to end the safety conversation.

Contract breach by upload

Most NDAs, MSAs, and MNDAs name third-party disclosure as the breach trigger. The AI vendor is a third party, so the paste is the disclosure.

Privilege waiver

In a February 2026 federal court ruling (United States v. Heppner), a New York judge held that ChatGPT-style chats are not protected by attorney-client privilege or by the protection that normally covers a lawyer's working notes — the AI company is treated as an outside party that keeps everything you type. The ruling has dominated legal news since February.

Training-data ingestion

The provider's terms of service treat your inputs as content the model can train on by default. Cyberhaven research, analysing usage from 1.6 million knowledge workers, found that 11% of all data employees paste into the consumer chat tab is confidential, and the average organization leaks sensitive data hundreds of times per week.

Data spillage between sessions

A March 2023 bug in the open-source redis-py library caused ChatGPT to mis-route cached user data, exposing payment details for roughly 1.2% of ChatGPT Plus subscribers who were active during a nine-hour window.

“Delete” doesn't mean deleted either. In late 2025, OpenAI was ordered to produce 20 million logs to plaintiffs in the New York Times copyright lawsuit, including chats users had already removed.

None of the four failure modes is an accident of the technology. Each is written into the policies you accepted on signup, and AI use in legal practice is running ahead of the privacy and security review that teams still need to do.

Why Consumer AI Vendor Contracts Treat Your Confidentiality as Training Data

None of this is an oversight. The vendor agreements you clicked through during onboarding are short, broad, and written for the provider, not for you. Read them three in a row and the same shape keeps showing up.

- Anthropic: “We may use your Inputs and Outputs to train our models.”

- Google Gemini Apps Privacy Hub: “A subset of chats are reviewed by human reviewers (including Google's trained service providers) to help improve Google services.”

- Microsoft consumer Copilot (Services Agreement): “Microsoft will process and store your inputs to the service as well as output from the service, for purposes of monitoring for and preventing abusive or harmful uses or outputs of the service.”

The “30-day delete” promise doesn't really mean deleted. Anthropic says deleted conversations are “automatically deleted from our back-end within 30 days.” Google adds that human-reviewed chats are “retained for up to three years,” even after you delete your activity.

A court order overrides both. Anthropic's reserved right to “disclose personal data to governmental regulatory authorities as required by law” is the clause that made the Heppner ruling possible.

Three frameworks treat the paste as third-party disclosure across multiple jurisdictions.

- American Bar Association ethics rule (Model Rule 1.6(c)): lawyers must “make reasonable efforts to prevent the inadvertent or unauthorized disclosure of, or unauthorized access to, information relating to the representation of a client.”

- ABA Formal Opinion 512 (the bar association's first official guidance on generative AI): tools like ChatGPT “raise the risk that information relating to one client's representation may be disclosed improperly,” and the opinion requires the client's explicit informed consent before you paste their data — the standard boilerplate buried in an engagement letter is not enough.

- EU and California data protection laws (GDPR Article 28 and CCPA): both require a formal data processing agreement with any service handling personal data, and the free ChatGPT or Claude chat tab does not offer one.

For more on the broader pattern, see AI privacy risks most users don't realize. Once the rules already classify the paste as disclosure, one consequence dominates: privilege.

AI Contract Review and the Hidden Audit Trail: Who Reads It After You Upload

Most lawyers' first instinct is to ask, “is the data safe?” After the Heppner and Krafton rulings, the better question is, “who's on the receiving end, and what can a court later force them to hand over?” Once a cloud AI service is the recipient, the chat becomes a record, and records get pulled into evidence.

In plain language, the Heppner ruling reframes the paste itself. When a lawyer or client pastes legal work into a consumer AI, those messages are no longer protected by attorney-client privilege or the protection that normally covers a lawyer's working notes, because the AI company is treated as an outside party that keeps your data and may have to hand it over. The New York State Bar's takeaway was five words: “Loose AI prompts sink ships.” AI contract review marketed as an internal legal tool quietly hands an outside system the entire audit trail.

There is a second problem, captured by Above the Law: “There is no commitment to eliminate metadata or logging information, and there is no audit feature should you need to establish confidentiality.” Once it is uploaded, the chat is a record you cannot later prove was private.

Three roles see the impact in different ways.

- In-house counsel: every contract edit you paste could be handed over as evidence if a dispute later goes to court.

- Paralegals: pasting your supervising lawyer's notes or drafts into a public AI tool may strip the attorney-client protection that came with them.

- Contract managers: the real check is what is written into the vendor's privacy contract, not what a setting toggle in the app appears to say.

What Lawyers Think Is Safer When They Use AI for Contract Work, and What Actually Is

Most “safer AI” advice quietly ends in the cloud. The standard explainer offers two options: consumer chatbots (bad), enterprise SaaS legal tools with zero data retention (good). That's true, and it's also missing a third column.

Using AI for contract drafting still leaks the underlying language unless the architecture itself stops the transmission. There are actually three options worth comparing, not two.

Consumer chatbot (no DPA)

The consumer tier is the failure case: paste-and-pray, with the provider's terms governing use. No DPA, no retention limits, no audit trail you control.

Enterprise SaaS with zero data retention (ZDR)

Harvey's security page, for example, states: “We don't use inputs, outputs, or uploaded documents to train underlying models...Harvey contractually guarantees through our Platform Agreement that your data stays yours.” That is meaningful, but it is still a trust-based promise about a contract sitting on someone else's machine.

On-device AI

The contract is processed on the lawyer's Mac and never transmitted to any cloud, so there is no third party to subpoena, to train on it, or to put it in front of a human reviewer. The privacy guarantee is architectural, not contractual.

For more on this larger pattern, read the AI for sensitive data guide. AI adoption among AmLaw 200 law firms has run ahead of data handling practices, and AI innovation has outpaced data security expectations.

Treating AI as a drafting partner, not a permanent data processor, is the structural fix. Whether your inputs train the vendor's model, whether attorney-client protection has tightened (it has, after the Heppner ruling), whether a “we don't keep your data” promise is actually written into the contract: these are questions about where the data goes, not what features the tool has.

CLM Workflow Risks: Manual Review, Legal Accuracy, and Best Practices for Responsible AI

Going from a fifty-clause redline to a contract you can defend has not really changed since paper days. You still need a lawyer with judgement, a clean record of what changed, and an audit trail somebody else on your team can pull up six months later without guessing.

AI speeds up the boring parts. It also opens new gaps a human still has to close, and tools that move fast without leaking the underlying contract are rare. Reading every clause by hand is the legacy workaround, and on a busy desk it just stops happening past clause twenty.

Five rules keep legal teams both fast and defensible.

- Write down which AI tools your team is allowed to use, and default-deny the consumer chat tab. People follow rules they can see, not rules they have to guess at.

- Lock down the contract repository with appropriate security controls before you wire any AI integration into it. Don't bolt AI onto an open share.

- Check sector rules and intellectual property duties before the model sees a sensitive contract or NDA draft. GDPR or CCPA exposure tends to live in the documents you'd most want help with.

- Run a quarterly review of the contract portfolio, the integrations you rely on, and the data-use agreements behind them. Vendor terms drift, often quietly.

- Actually read the cloud AI terms of service, not just the marketing page. The training and retention clauses are where the work-product ends up.

Treat AI as a copilot that must be compliant by default, not a finished associate you can hand work to. A one-page responsible-AI policy covering data privacy, allowed routes, and a fast escalation path costs an afternoon and saves the conversation when something breaks.
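The first rule above (a written list of approved tools, with everything else denied by default) can be expressed in a few lines. The following is a minimal sketch, not a product feature: the host names in `APPROVED_AI_HOSTS` are placeholders, and the function name `is_route_allowed` is hypothetical. A real deployment would enforce this at the network or device-management layer rather than in a script.

```python
from urllib.parse import urlparse

# Hypothetical allowlist: hosts the team has approved in writing.
# Both entries are placeholders for illustration.
APPROVED_AI_HOSTS = {
    "localhost",            # an on-device model served locally
    "ai.internal.example",  # an enterprise endpoint covered by a signed DPA
}


def is_route_allowed(url: str, approved: set[str] = APPROVED_AI_HOSTS) -> bool:
    """Default-deny: allow a destination only if its host is on the written list."""
    host = urlparse(url).hostname  # None when the string has no parseable host
    return host is not None and host in approved


if __name__ == "__main__":
    for candidate in (
        "http://localhost:8080/v1/chat",
        "https://chat.example.com/",
    ):
        verdict = "allowed" if is_route_allowed(candidate) else "denied"
        print(f"{candidate}: {verdict}")
```

The design choice is that anything not explicitly named is rejected, including malformed input, which mirrors a policy people can read rather than guess at.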

Consumer endpoints fail on client confidentiality, full stop. The risk isn't theoretical: it's what every terms-of-service line confirms when you read it carefully.

The Elephas Approach to AI Contract Management: On-Device AI for Contract Review

On-device AI is the only architecture where the contract never leaves the lawyer's Mac. Elephas is a privacy-friendly AI knowledge assistant for Mac, iPhone, and iPad.

Three building blocks for the workflow.

- Built-in local LLM models on the device. Elephas provides built-in local LLM models, no Ollama or external install required. A draft NDA, MSA, or SOW is reviewed entirely on-device, and the on-device path is the product default, not a power-user mode.

- Smart Redaction (beta) for the cloud calls you can't avoid. Sensitive data is automatically detected and redacted before anything reaches a cloud AI model, your content is never used to train AI models, and nothing passes through a third-party reviewer's screen.

- Bring your own model. Run Elephas with any cloud AI model using your own API keys — OpenAI, Claude, Perplexity, Gemini, Grok, and more. Elephas wraps the chosen model with privacy; it does not replace it.
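To make the idea of redacting before a cloud call concrete, here is a minimal sketch of pattern-based redaction with a mapping that stays on the local machine. It is an illustration only and does not describe how Elephas Smart Redaction works internally. The patterns, the placeholder format, and the function names `redact` and `restore` are all assumptions, and three regular expressions are nowhere near sufficient coverage for a real contract.

```python
import re

# Illustrative patterns only; real contracts need far broader coverage
# (names, addresses, account numbers, deal terms, and so on).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "AMOUNT": re.compile(r"\$\d+(?:,\d{3})*(?:\.\d+)?(?:\s?(?:million|billion))?"),
}


def redact(text: str) -> tuple[str, dict[str, str]]:
    """Swap sensitive matches for numbered placeholders.

    Returns the redacted text plus a placeholder-to-original mapping
    that is meant to stay on the local machine.
    """
    mapping: dict[str, str] = {}
    for label, pattern in PATTERNS.items():
        def _substitute(match: re.Match, label: str = label) -> str:
            token = f"[{label}_{len(mapping) + 1}]"
            mapping[token] = match.group(0)
            return token
        text = pattern.sub(_substitute, text)
    return text, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back into a response that uses the placeholders."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


if __name__ == "__main__":
    clause = "Buyer shall pay $250,000 to jane.doe@example.com by March 1."
    safe_text, local_map = redact(clause)
    print(safe_text)                      # only this string would leave the device
    print(restore(safe_text, local_map))  # the mapping never leaves it
```

Only the redacted string would be sent to a cloud model; the mapping is kept locally so that placeholders in the response can be filled back in afterwards.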

What to Do Tomorrow Morning: A Practical Guide to Contract Management

Run this three-check sequence before you open any AI tab tomorrow. Pick the most sensitive deal document on your desk: a deal-closing binder, an MNDA with a strategic supplier, an offer letter with non-compete language, or a service-provider SOW.

Check 1: Route

Confirm whether the prompt actually runs on your device or in someone's cloud; if cloud, lock the no-retention clause in writing.

Check 2: Training

Verify whether the vendor trains on your inputs, with the toggle set the way you think it is.

Check 3: Evidence

Imagine the conversation surfacing in a subpoena tomorrow, and decide whether you'd be comfortable with what's in it.
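The three checks can be written down as a small pre-flight routine. This is a sketch under stated assumptions: `ToolProfile`, `runs_on_device`, and `preflight` are hypothetical names, the answers to Checks 2 and 3 are entered by a person after reading the vendor terms, and a loopback address only shows where the request is first sent, not that the local software forwards nothing onward.

```python
import ipaddress
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class ToolProfile:
    endpoint: str                  # where the prompt is sent
    no_retention_in_writing: bool  # Check 1: a signed no-retention clause is on file
    trains_on_inputs: bool         # Check 2: verified from the terms, not the UI toggle
    ok_if_subpoenaed: bool         # Check 3: the reviewer's own judgement


def runs_on_device(endpoint: str) -> bool:
    """True when the endpoint's host is localhost or a loopback IP address."""
    host = urlparse(endpoint).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:  # a DNS name other than localhost
        return False


def preflight(tool: ToolProfile) -> list[str]:
    """Return the list of failed checks; an empty list means all three passed."""
    problems = []
    if not runs_on_device(tool.endpoint) and not tool.no_retention_in_writing:
        problems.append("Route: cloud endpoint without a written no-retention clause")
    if tool.trains_on_inputs:
        problems.append("Training: vendor may train on inputs")
    if not tool.ok_if_subpoenaed:
        problems.append("Evidence: not comfortable seeing this chat in a subpoena")
    return problems


if __name__ == "__main__":
    consumer_tab = ToolProfile("https://chat.example.com/api", False, True, False)
    for problem in preflight(consumer_tab):
        print(problem)
```

Returning a list of failures rather than a single yes or no keeps the reason for a block visible, which helps when the result has to be explained to a colleague.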

The summary in three lines.

- The harm starts at the upload, not at some later data leak. The Heppner and Krafton rulings proved it.

- Cloud-only tools are trust-based: the contract has already left your laptop the moment you paste it.

- If you'd rather skip the trade-offs entirely, try Elephas — a privacy-friendly AI knowledge assistant for Mac with built-in local LLM models, so the next contract you would have pasted into a consumer chat tab at 11pm stays on your device instead.

Frequently Asked Questions

Can you safely upload contracts to ChatGPT or another consumer AI?

By default, no. Uploading a contract to a free chatbot can break the confidentiality clause inside the contract itself, strip away attorney-client protection (a February 2026 federal ruling, United States v. Heppner, confirmed this), and create a record that can be requested as evidence even after you delete the chat. Anthropic's privacy policy says inputs may be used to train models. Microsoft's consumer Copilot agreement says inputs are processed and stored. The safe paths are on-device AI or a paid enterprise plan with a signed data processing agreement and a written promise that nothing is retained.

Does pasting a contract into a consumer AI waive attorney-client privilege?

Yes, in most cases. In a February 2026 federal court ruling (United States v. Heppner), Judge Jed S. Rakoff held that ChatGPT-style chats are not protected by attorney-client privilege or by the protection that normally covers a lawyer's working notes, because the AI company is treated as an outside party that keeps everything you type. Anything you paste can later be requested as evidence if a dispute goes to court. The ruling has dominated legal news since February.

What do EU and California data protection laws say about uploading contracts to AI tools?

Europe's GDPR (Article 28) requires a formal data processing agreement with any service handling personal data. The free ChatGPT, Claude, and Gemini chat tabs do not offer one, so the upload itself is not compliant when the contract contains personal information. California's CCPA puts similar duties on businesses. The New York City Bar's AI task force adds that a “do not train on my data” toggle is not, by itself, enough to meet a lawyer's ethics duty under ABA Model Rule 1.6.

Are there local AI tools that can review contracts offline?

Yes. On-device AI processes the contract entirely on the lawyer's Mac with built-in local LLM models, so the document never reaches a cloud provider. There is no third party to retain it, train on it, or be subpoenaed for it. Tools like Elephas pair a built-in local LLM with Smart Redaction (beta) for the cloud calls you can't avoid, so even hybrid workflows keep sensitive contract data private.

What are the best practices for using AI on contract work?

Set clear, written rules on AI across the team — name the approved tools, and block the free ChatGPT or Claude tab by default. Lock down access to where contracts are stored before wiring any AI tool into them. Check the rules of your industry and any intellectual property duties before letting an AI model see a sensitive contract. Review your contracts and AI integrations quarterly, including the privacy contracts behind every tool you use. And actually read the terms of service buried in cloud AI agreements, not just the marketing page.

Ready to review contracts without the upload risk?

Elephas runs locally on Mac with built-in local LLM models and Smart Redaction (beta) for the cloud calls you can't avoid. Your client's contract data never trains anyone's model.

Try Elephas for Free

Related Resources

Explore all AI for Lawyers resources

- AI for Sensitive Data: A Complete Guide to Using AI Tools Without Exposing Sensitive Company Information (2026 Edition) (10 min read)

- Article: Is ChatGPT Attorney-Client Privilege Protected? The Heppner Ruling and Generative AI in Litigation (11 min read)

- Article: Can AI Tools Waive Attorney-Client Privilege? What Every Lawyer Must Know (14 min read)

- Comparison: 7 Best Private AI Tools for Lawyers in 2026 (Local & Offline Options) (18 min read)

Sources

- Sidley: Fortis Advisors v. Krafton — ChatGPT logs in $250M earnout opinion (Del. Ch. 2026-03-16)

- Harvard Law Review Blog: United States v. Heppner — privilege waiver for consumer chatbot exchanges (S.D.N.Y. 2026-02-17)

- Cyberhaven: 11% of data pasted into ChatGPT is confidential (1.6 million knowledge workers)

- ABA Formal Opinion 512 — first ethics guidance on generative AI tools (July 2024)

- Bloomberg Law: OpenAI ordered to produce 20 million ChatGPT logs in NYT copyright case