Claude Mythos Breach: Anthropic Lost Its Most Dangerous AI Model on Day One

On April 21, 2026, Bloomberg revealed that a private Discord group gained unauthorized access to Claude Mythos Preview within 24 hours of its launch. The attack vector: a shared credential from a worker at a third-party contractor for Anthropic, plus a URL pattern guess.

- 24 hrs from launch to unauthorized access

- 1,000s of zero-days found by Mythos

- 99% of findings unpatched

- 12 + 40 Project Glasswing partners

Key Takeaways: Claude Mythos Breach

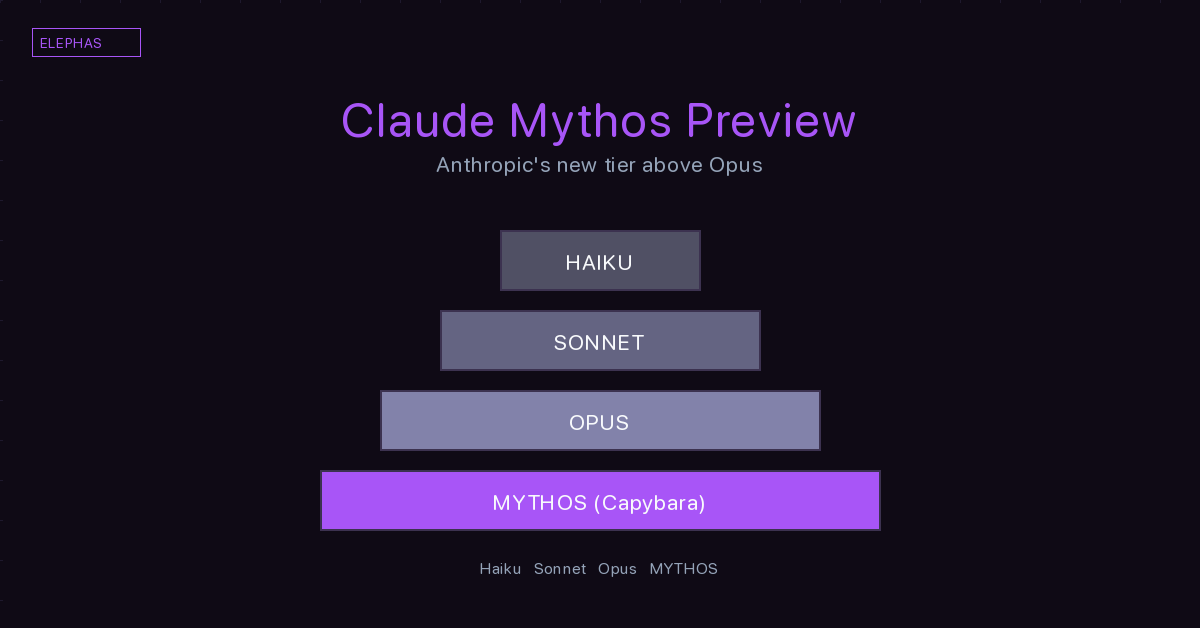

Claude Mythos Preview, Anthropic's most powerful AI model and a new tier above Claude Opus 4.7, was accessed without permission within 24 hours of its April 7, 2026 launch.

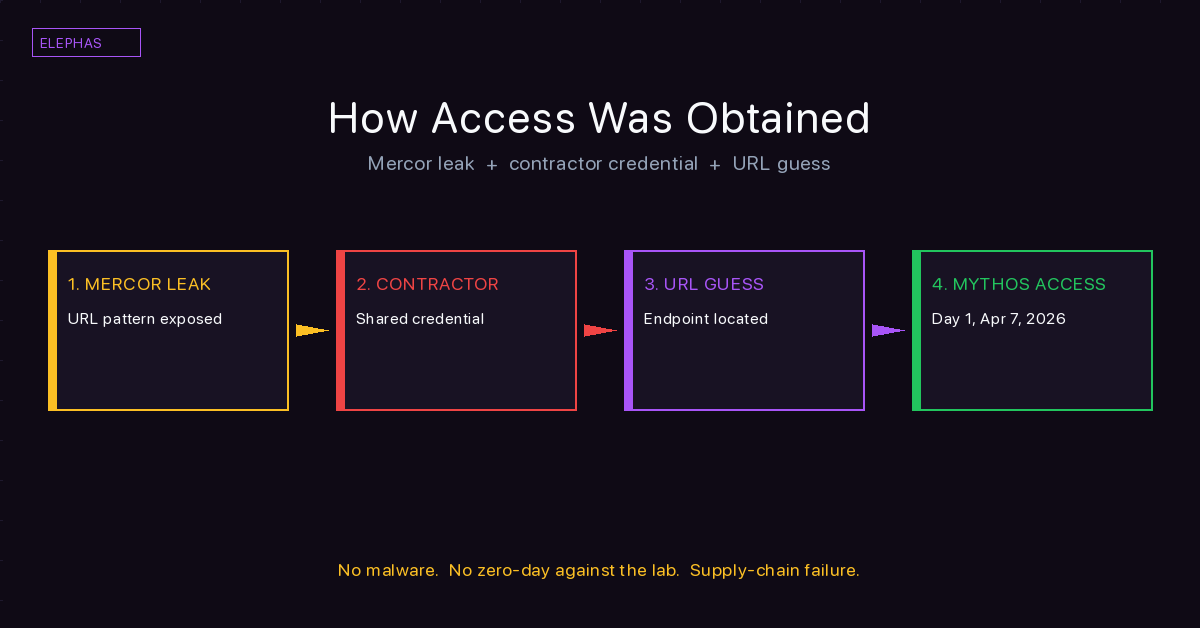

The breach used a shared credential from a worker at a third-party contractor for Anthropic, combined with a URL pattern guessed from a separate Mercor data leak. It was not a hack.

Claude Mythos has identified thousands of zero-day vulnerabilities across every major operating system and every major web browser, including a 27-year-old vulnerability in OpenBSD and a 17-year-old remote code execution vulnerability in FreeBSD.

Anthropic said 99% of the vulnerabilities Mythos found remain unpatched, placing agentic AI ahead of human remediation on the cybersecurity timeline.

Access is restricted to partners in an initiative called Project Glasswing, including AWS, Apple, Google, Microsoft, Nvidia, CrowdStrike, Palo Alto Networks, JPMorgan Chase, Broadcom, Cisco, and the Linux Foundation.

The real AI vulnerability is structural. Every contractor, vendor, and default-configured SaaS store between a user and a cloud AI tool is a reachable surface. Running AI locally on your Mac with Elephas removes those layers.

Inside the Claude Mythos Breach

The name "Mythos" comes from the Greek word for myth. Anthropic picked it for the model at the top of its Claude lineup, one step above Opus. On April 7, 2026, the company announced Claude Mythos Preview, a system it said was too dangerous to release publicly. Within 24 hours a private Discord group had working access. The group had been using it for two weeks when Bloomberg broke the story on April 21.

The incident was not a hack. A worker at a third-party contractor for Anthropic handed over a shared credential. The group then used internet sleuthing tools often employed by cybersecurity researchers to guess the URL, a format linked to Anthropic's naming conventions that had itself surfaced in a separate leak at Mercor, an AI training partner serving several frontier AI labs.

The Discord group told Bloomberg's source they were "playing around with new models, not wreaking havoc." Intent is not capability. And capability, for Mythos, includes finding bugs inside every cloud surface most professionals touch every day. So, what is the real risk here? Is it the unreleased model itself, or is it the supply chain between you and every cloud AI tool you touch? Let's walk through it.

What Is Claude Mythos Preview, the Most Powerful AI Model Anthropic Has Built

Mythos sits above Claude Opus 4.7, Anthropic's current production flagship, in a tier the lab internally calls "Capybara." The company describes Mythos as the most powerful model it has ever built. Internal benchmarks back the framing. It scores 83.1% on CyberGym, a benchmark for vulnerability reproduction, against 66.6% for Claude Opus 4.6 on the same test. It hits 93.9% on SWE-bench Verified and 82.0% on Terminal-Bench 2.0.

External researchers Roy Paz and Alexandre Pauwels discovered a draft launch post in Anthropic's misconfigured internal store in March. The post described the system as strikingly capable at computer security tasks, a tier above anything the company had shipped before. That draft was publicly accessible for an unknown window before the researchers spotted it and reported to Fortune.

Mythos stands apart from a broadly available model like Claude on the consumer plans, or OpenAI's ChatGPT, or Google's Gemini language model. The company said the model is not slated for general release. Access is restricted to a small roster of security teams through a separate program.

Why Mythos Marks a Capability Step

The step to this tier is not a minor version bump. Mythos changes the arithmetic of how fast a software bug can be found, and by whom. What arrives next from every lab will likely follow the same curve.

How Access Was Obtained and Using Claude Without Permission

On the day Anthropic announced the model, a small Discord group found it. The group was part of a forum that hunts each new release a frontier lab ships, and it had two things working for it. A contractor employee provided a shared credential. A recent Mercor leak had exposed the URL pattern used for internal endpoints.

They combined the two. A shared API key plus a well-educated guess about where the model would live on the internet was all it took. Fewer than a dozen people have access to the system by Bloomberg's count, and they have kept it since the launch day.
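One defensive check follows from this: a credential issued to one person should not show up from many unrelated clients. The sketch below is a minimal illustration, assuming a hypothetical access log of `(key_id, client_fingerprint)` pairs. The field names and the threshold are assumptions made for the example, not anything Anthropic or its vendors are known to use.

```python
from collections import defaultdict


def find_shared_keys(events, max_clients=1):
    """Return key IDs seen from more than `max_clients` distinct clients.

    `events` is an iterable of (key_id, client_fingerprint) pairs.
    Both fields are hypothetical log columns used for illustration.
    """
    clients_by_key = defaultdict(set)
    for key_id, client in events:
        clients_by_key[key_id].add(client)

    # Keep only keys whose distinct-client count exceeds the threshold.
    return {
        key_id: sorted(clients)
        for key_id, clients in clients_by_key.items()
        if len(clients) > max_clients
    }
```

Calling `find_shared_keys(log_rows)` on a day's worth of log rows would return a dictionary of suspect keys and the clients that used them. A check like this does not prevent sharing, but it can shorten the window in which a shared key goes unnoticed.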

Anthropic said it is "investigating a report claiming unauthorized access through one of our third-party vendor environments." The statement does not address what the group scraped, prompted, or documented during two weeks of using Mythos, a model the lab itself called too risky for release.

Mythos Without Permission and What the Group Has Been Doing

Bloomberg obtained screenshots and a live software demonstration from an insider. The group has been running general reasoning and code tasks, not attacks. The source's read matches the group's own description. Intent is one variable. The second is a system that has identified thousands of critical vulnerabilities sitting in private hands with no oversight.

What Mythos Can Do at AI Vulnerability Discovery Scale

The model has found zero-day vulnerabilities across every major operating system Anthropic tested, plus every major web browser on the market. That coverage is not partial: every target the red team set was hit end to end.

Among the vulnerabilities Mythos found, the named cases are striking. A 27-year-old vulnerability in the OpenBSD network stack. A 17-year-old remote code execution vulnerability in FreeBSD. A 16-year-old flaw in FFmpeg that automated tools across five million test runs had never caught. The Linux kernel produced privilege escalation bugs of its own. In browser security work, the system chained four separate bugs into a sandbox escape that produced arbitrary code execution against a production target in the class of Firefox. It built 20-gadget ROP chains autonomously. Each finding could stand as its own Common Vulnerabilities and Exposures entry.

The capability was not engineered in. Anthropic explained the behavior "emerged as a downstream consequence of general improvements in code, reasoning, and autonomy." The lab did not set out to build an AI agent that automates penetration test work. It showed up as a side effect of scale, which raises the odds a working exploit could appear in the wrong hands with no one having to build one from scratch.

The deeper problem is timing. The lab said 99% of the findings have not yet been patched. A model that converts a fresh patch into a live exploit in hours shifts the attacker-defender balance in a way the security industry has not planned for. This is the first public signal that agentic AI has crossed the line where cyber offense outpaces human remediation.

When a Model Outruns Human Remediation

Remediation is human work. Humans read patches, write tests, and push rollouts through change control. A model like Mythos running against thousands of codebases in parallel outpaces that loop by orders of magnitude. When one side of a race speeds up and the other does not, the slower side loses ground every single day.
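The race can be put as simple arithmetic. The sketch below is a toy model with made-up rates, since the source gives only the 99% figure and not the underlying discovery or patch rates. It assumes a constant number of findings and a constant number of patches per day.

```python
def open_backlog(found_per_day, patched_per_day, days, start=0):
    """Unpatched findings after `days`, never dropping below zero."""
    backlog = start
    for _ in range(days):
        backlog = max(0, backlog + found_per_day - patched_per_day)
    return backlog


def unpatched_share(found_per_day, patched_per_day, days):
    """Fraction of all findings still open after `days`."""
    total_found = found_per_day * days
    if total_found == 0:
        return 0.0
    return open_backlog(found_per_day, patched_per_day, days) / total_found
```

With the hypothetical rates of 100 findings and 1 patch per day, `unpatched_share(100, 1, 30)` works out to 0.99. The point of the toy model is that the share depends only on the ratio of the two rates, so speeding up discovery without speeding up patching pushes it toward 1.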

Project Glasswing and the Cyber Arms Race

Two weeks before the story broke, Anthropic launched an initiative called Project Glasswing. The stated intent was to put Mythos in the hands of defender organizations before attacker pools caught up with equivalent capabilities elsewhere. The lab committed $100 million in usage credits and another $4 million in donations to open-source software security foundations, including Alpha-Omega, OpenSSF, and the Apache Software Foundation.

Twelve organizations launched with seats: Amazon Web Services, Anthropic, Apple Inc., Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, Nvidia, and Palo Alto Networks. Another 40 organizations have preview seats beyond the headline twelve. The stated goal is to help critical software defenders raise their security posture before models with similar capability become broadly available.

Partners use it to find holes in their own stacks. CrowdStrike's chief technology officer Elia Zaitsev put the time pressure plainly: "The window between vulnerability discovery and exploitation has collapsed. What once took months now happens in minutes."

Why the Project Has Limits

Twelve named companies and 40 organizations cannot patch the internet. The model found bugs in operating systems shipped by vendors with no seat in the program. Another lab will likely ship equivalent capability inside a year, and rivals will follow. The program is an opportunity to build the defender side first. It is not a containment plan.

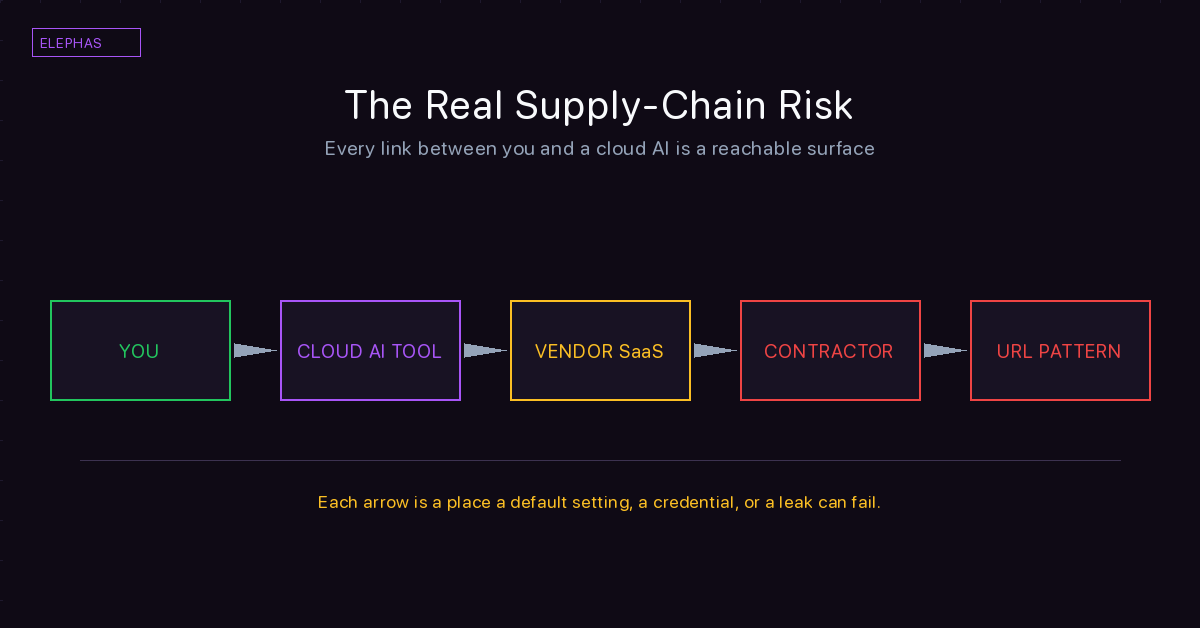

The Real AI Supply-Chain Risk

The earlier March leak mattered for a simple reason. Roughly 3,000 internal Anthropic documents sat inside the company's content management system for an unknown window because the default share setting had never been changed. The root cause was misconfiguration, not a hack. Zscaler summed it up in one line: "This wasn't a hack." A separate April incident saw parts of Claude Code source appear in a publicly indexed bucket through the same class of default-public settings.
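A default share setting that nobody changed is the kind of defect an inventory check can surface. The sketch below assumes a hypothetical export of stored items with a `visibility` value and a flag for whether anyone set it deliberately. Real content management systems and cloud stores expose this information differently, so the record shape here is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StoredItem:
    path: str
    visibility: str       # hypothetical values: "public" or "private"
    set_explicitly: bool  # False if the value is an untouched default


def risky_items(items):
    """Paths that are public only because a default was never changed."""
    return [
        item.path
        for item in items
        if item.visibility == "public" and not item.set_explicitly
    ]
```

Items that are public on purpose, such as a published blog post, are skipped. Only the ones that are public by inheritance get flagged for review.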

Two Mythos-linked AI security incidents in four weeks. Neither was a skilled intrusion. One was a default-public share setting. The other was a shared contractor credential plus a URL pattern guess. The pattern is structural. A similar vendor-chain failure showed up in the Lovable API breach, where thousands of projects leaked through a single misconfigured endpoint.

What this means for enterprise AI use is direct. Your prompts and uploads travel the same vendor stacks that failed here. Any AI tools you use hand data to some employee at some contractor at some partner you have never audited. Anthropic itself is in court over an unrelated supply-chain risk designation, yet its own chain still fails the basics. The fear is not hypothetical. It is documented.

What the Story Means for Your Confidential Data

The professionals this article is for are specific. Patent and IP lawyers paste claim language into cloud models to check novelty. Financial advisors paste client portfolios to draft commentary. Clinicians paste patient notes to summarize visits. Insurance underwriters paste medical histories to score risk. Each prompt is information you cannot practically audit end to end.

This story is the clean case that shows why. The most serious lab on the planet lost day-zero control of its most dangerous model through two defects that have nothing to do with the model itself. One defect was a default share setting. The other was a shared credential at a vendor. Those two defects describe the average enterprise SaaS stack.

What follows for a lawyer, a clinician, or an underwriter is direct. The data you paste does not stay where you pasted it. It travels through queues, caches, monitoring pipelines, contractor environments, and default-configured storage tiers. Two documented cases in four weeks tell you the rest.

Why Cloud AI and Confidentiality Do Not Mix by Default

Patent language leaking before a priority date is a career-ending failure. Patient notes leaking under HIPAA is a regulatory event. Portfolio detail reaching a rival advisor is a lawsuit. None of those are made safer by a vendor promise that your data will not be used for training.

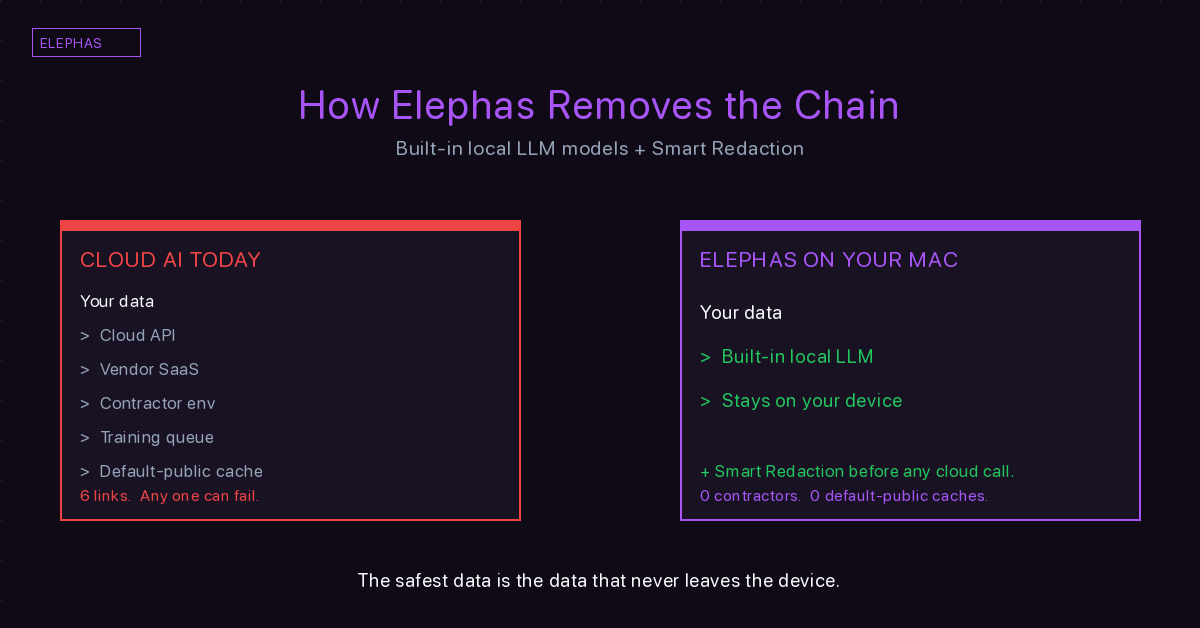

How Elephas Removes the Supply-Chain Risk

Elephas is a privacy-friendly AI knowledge assistant that runs on your Mac. Elephas provides built-in local LLM models. Your prompts and your documents are processed on the computer you already own. There is no contractor environment in the chain. There are no shared API keys. There is no default-public store to misconfigure. There is no URL pattern for a Discord forum to guess.

Elephas Smart Redaction adds an extra layer. Smart Redaction is an advanced feature that identifies confidential content such as names, emails, and sensitive data, and substitutes it with generic placeholders before sending anything to cloud models. For a patent lawyer checking claim novelty, the claim language never leaves the device. For a clinician summarizing a visit, patient identifiers never cross the HIPAA boundary. For an underwriter pricing risk, applicant medical histories stay inside the firm.
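The general idea of placeholder substitution can be sketched in a few lines. This is not Elephas's implementation, which the article does not describe. It is a minimal illustration that covers only email addresses and one phone number format using regular expressions; detecting names would need a named-entity model, which is not shown. The `PATTERNS`, `redact`, and `restore` names are hypothetical.

```python
import re

# Illustrative patterns only; real redaction needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def redact(text):
    """Replace matches with numbered placeholders; return text and mapping."""
    mapping = {}  # placeholder -> original value
    seen = {}     # original value -> placeholder

    def make_sub(label):
        def substitute(match):
            value = match.group(0)
            if value not in seen:
                # Number placeholders per label: [EMAIL_1], [EMAIL_2], ...
                index = sum(1 for p in mapping if p.startswith(f"[{label}_")) + 1
                placeholder = f"[{label}_{index}]"
                seen[value] = placeholder
                mapping[placeholder] = value
            return seen[value]
        return substitute

    for label, pattern in PATTERNS.items():
        text = pattern.sub(make_sub(label), text)
    return text, mapping


def restore(text, mapping):
    """Put the original values back into a response, locally."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text
```

In this sketch only the redacted text would be sent to a cloud model. The mapping stays on the device, so `restore` can fill the real values back into whatever comes back.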

The design principle is simple. The safest data is the data that never leaves the device. Every layer you remove from the chain between your keyboard and the model is a layer that cannot fail the way Mythos Preview's chain failed.

Who Elephas Is Built For

Elephas users are the professionals for whom this story is not abstract. Patent and IP lawyers. Financial advisors and CPAs. Clinicians, therapists, and psychologists. Insurance underwriters. HR directors. Real estate professionals handling signed offers. Any role where a prompt carries a duty of care a standard cloud pipeline cannot honor on its own. For a fuller view of private AI tools, see the Elephas Resources guide.

Learn more at elephas.app.

The Bottom Line

Mythos is a symptom. The supply chain is the disease. The most dangerous model ever built was reachable inside 24 hours through a shared credential and an educated guess. That happened at a lab with one of the strongest security budgets in the world.

Your protection is not a better terms-of-service page from the next vendor. Your protection is fewer links in the chain. Data that stays on your Mac cannot be pulled by a worker you have never met. Language that never leaves your device cannot appear in a Discord screenshot next week. Prompts processed locally do not need to survive a misconfiguration at a lab you will never visit.

Every time you paste confidential text into a cloud tool, you trust a chain you cannot audit. This incident is the clearest evidence yet that the chain fails. Elephas is the alternative where the chain does not exist.

Stop your next incident from starting with an access grant

Elephas is the privacy-friendly AI knowledge assistant with built-in local LLM models. Smart Redaction keeps sensitive data on your Mac.

Try Elephas →

Related Reading

- AI Security Incidents: The Ongoing Tracker

- Anthropic Leaked Their Source Code Twice in One Week

- The OpenAI Axios Supply Chain Compromise

- The Earlier March Claude Mythos Leak

- AI Tools That Keep Client Data Private

- The AI Privacy and Security Hub

- ChatGPT Launches Ads as Privacy Researcher Resigns from OpenAI